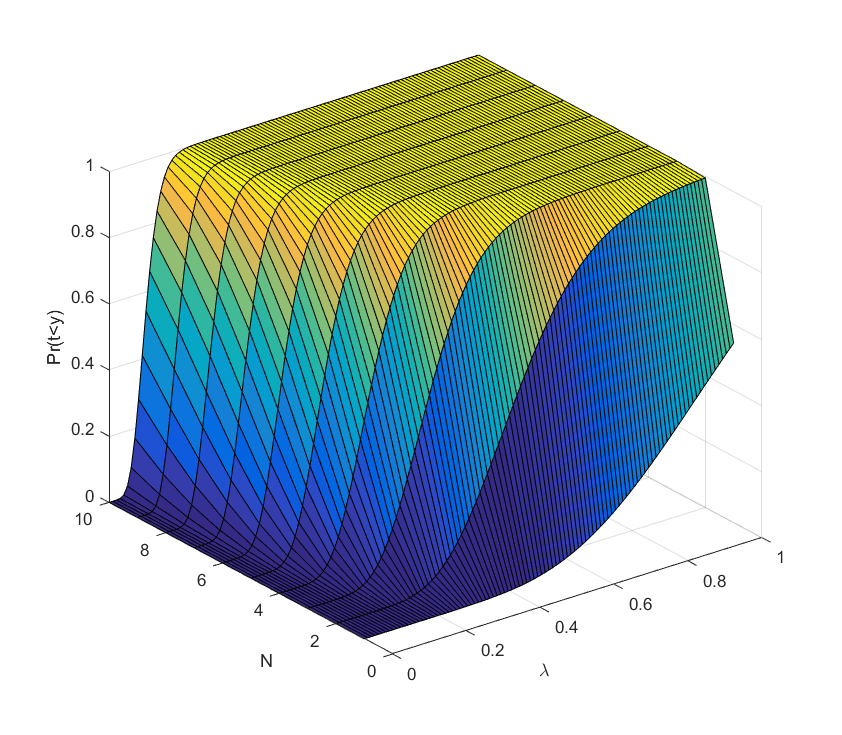

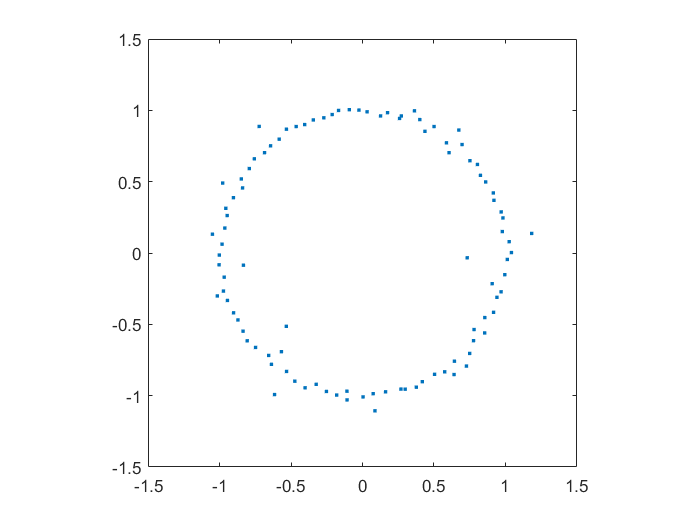

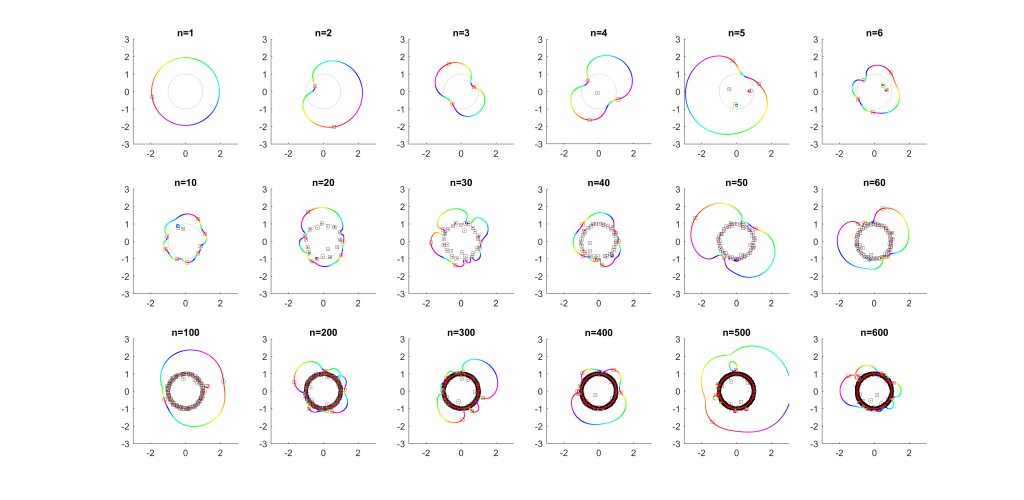

Playing with Matlab, I plotted the location of the zeros of a polynomial with normally distributed coefficients in the complex plane. It was nearly a circle:

This did not surprise me that much, since I had already toyed with the distribution of zeros of polynomials with coefficients in {-1,0,+1}, producing some neat distributions close to the unit circle (see also John Baez). A quick Google search turned up (Hughes & Nikeghbali 2008): under very general circumstances, polynomial zeros tend towards the unit circle. One can motivate this heuristically in a lot of ways.

As you add more and more terms to the polynomial, the zeros approach the unit circle. Each new term perturbs them a bit: at first they move around a lot as the degree goes up, but they soon stabilize into robust positions (“young” zeros move more than “old” zeros). This seems to be true regardless of whether the coefficients are set in “little-endian” or “big-endian” fashion.
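The experiment described above is easy to reproduce. Here is a minimal sketch in Python with NumPy rather than Matlab; the degree, the seed, and the annulus bounds 0.8 and 1.25 are arbitrary choices for illustration, not values from the original experiment.

```python
import numpy as np

def random_polynomial_zeros(degree, rng):
    """Zeros of a polynomial with i.i.d. standard normal coefficients."""
    coeffs = rng.standard_normal(degree + 1)  # highest power first
    return np.roots(coeffs)

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
zeros = random_polynomial_zeros(200, rng)
radii = np.abs(zeros)

# Share of zeros inside an (arbitrarily chosen) annulus around the unit circle.
near_circle = np.mean((radii > 0.8) & (radii < 1.25))
print(f"median |z| = {np.median(radii):.3f}, share in annulus = {near_circle:.2f}")
```

Plotting `zeros.real` against `zeros.imag` should give a ring-like picture of the kind described above.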

But then I decided to move things around: what if the coefficient on the leading term changed? How would the zeros move? I looked at the polynomial where

were from some suitable random sequence and

could run around

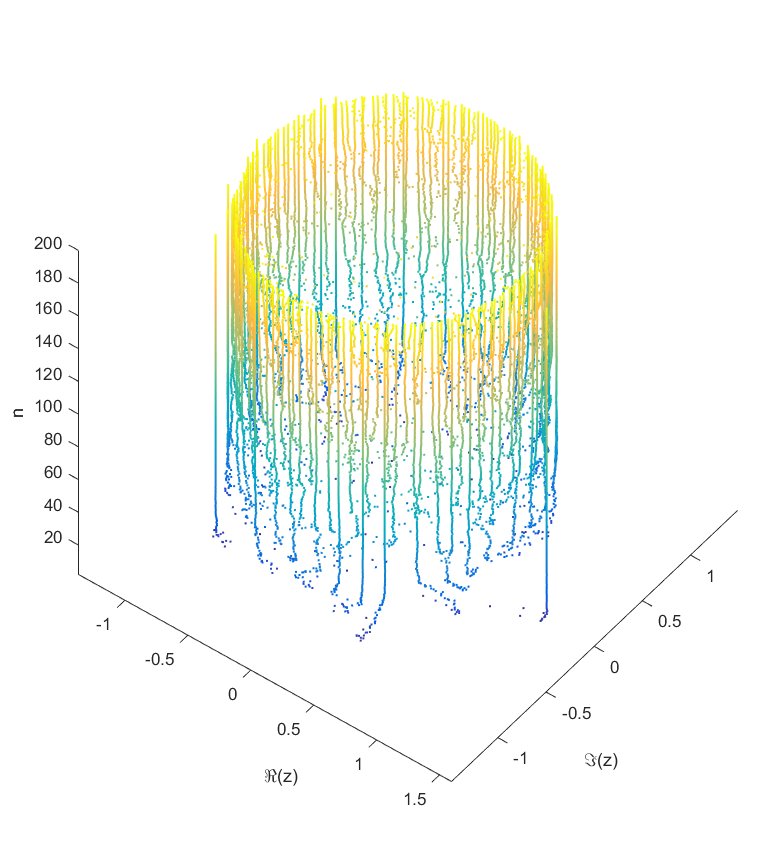

. Since the leading coefficient would start and end up back at 1, I knew all zeros would return to their starting position. But in between, would they jump around discontinuously or follow orderly paths?

Continuity is actually guaranteed, as shown by (Harris & Martin 1987). As you change the coefficients continuously, the zeros vary continuously too. In fact, for polynomials without multiple zeros, the zeros vary analytically with the coefficients.

As runs from 0 to

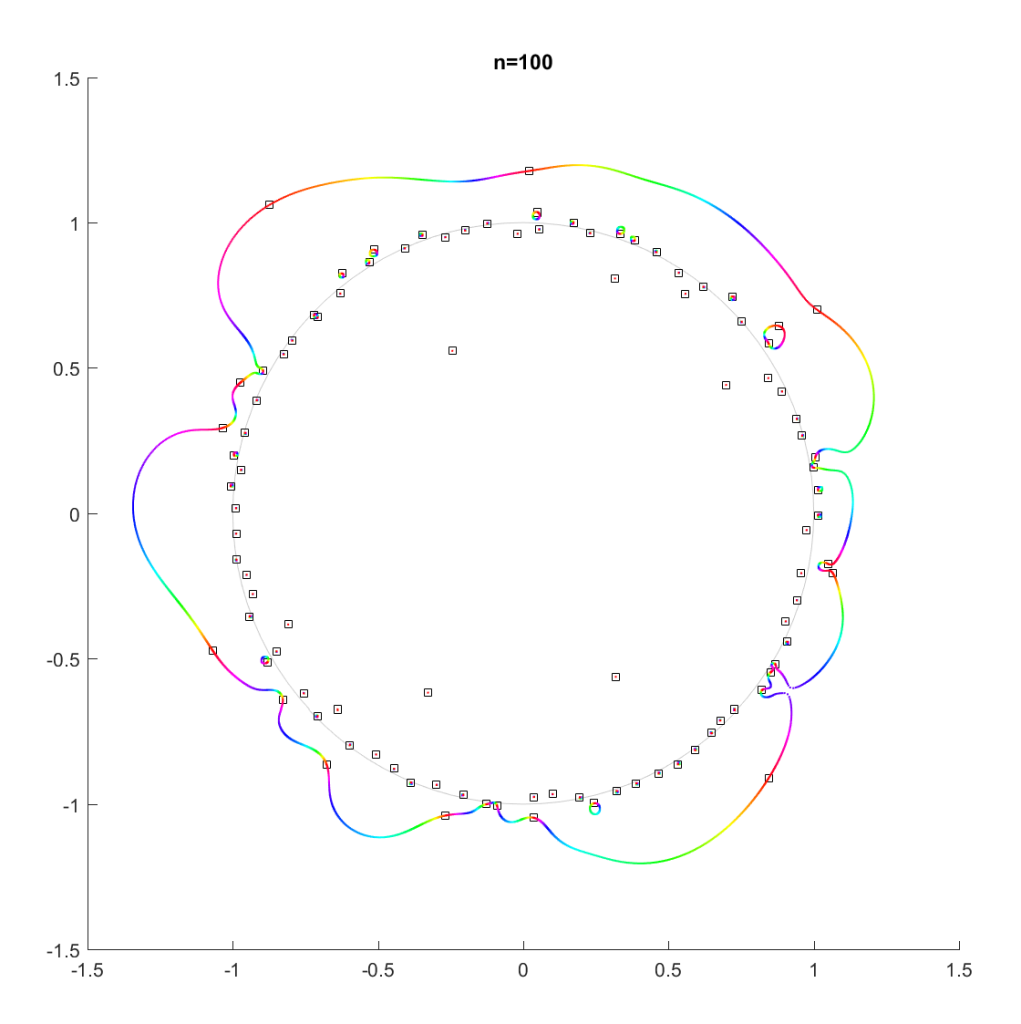

the roots move along different orbits. Some end up permuted with each other.

For low degrees, most zeros participate in a large cycle. Then more and more zeros emerge inside the unit circle and stay mostly fixed as the polynomial changes. As the degree increases they congregate towards the unit circle, while at least one large cycle wraps most of them, often making snaking detours into the zeros near the unit circle and then broad bows outside it.

In the above example, there is a 21-cycle, as well as a 2-cycle around 2 o’clock. The other zeros stay mostly put.
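One way to extract such cycles numerically is to follow each zero with a few Newton steps per small increment of the argument, and then compare where each zero ends up with where it started. The sketch below is an assumed approach, not necessarily how the plots here were made: the function names, the step count, and the extra magnitude parameter `r` (1 in the setting above) are hypothetical, and the matching can fail if two zeros pass very close to each other.

```python
import numpy as np

def track_zeros(lower, r=1.0, steps=2000, newton_iters=5):
    """Follow the zeros of r*exp(i*theta)*z**n + Q(z) for theta from 0 to 2*pi.

    lower: coefficients of Q, highest power first (so n = len(lower)).
    Returns the zeros at theta = 0 and the tracked zeros at theta = 2*pi.
    """
    lower = np.asarray(lower, dtype=complex)
    start = np.roots(np.concatenate(([r], lower)))
    z = start.copy()
    for theta in np.linspace(0.0, 2.0 * np.pi, steps + 1)[1:]:
        coeffs = np.concatenate(([r * np.exp(1j * theta)], lower))
        deriv = np.polyder(coeffs)
        # A few Newton steps pull each zero from its old position to the new one.
        for _ in range(newton_iters):
            z = z - np.polyval(coeffs, z) / np.polyval(deriv, z)
    return start, z

def cycle_lengths(start, end):
    """Cycle lengths of the map sending each starting zero to where it ends up."""
    # perm[k] = index of the starting zero closest to the endpoint of path k.
    perm = np.argmin(np.abs(end[:, None] - start[None, :]), axis=1)
    seen = np.zeros(len(perm), dtype=bool)
    lengths = []
    for k in range(len(perm)):
        length, j = 0, k
        while not seen[j]:
            seen[j] = True
            j = perm[j]
            length += 1
        if length:
            lengths.append(length)
    return sorted(lengths, reverse=True)

if __name__ == "__main__":
    rng = np.random.default_rng(1)        # arbitrary seed
    lower = rng.standard_normal(20)       # random lower-order coefficients
    start, end = track_zeros(lower)
    print(cycle_lengths(start, end))      # e.g. one long cycle plus fixed zeros
```

Storing `z` at every step instead of only at the end would give the orbits themselves for plotting.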

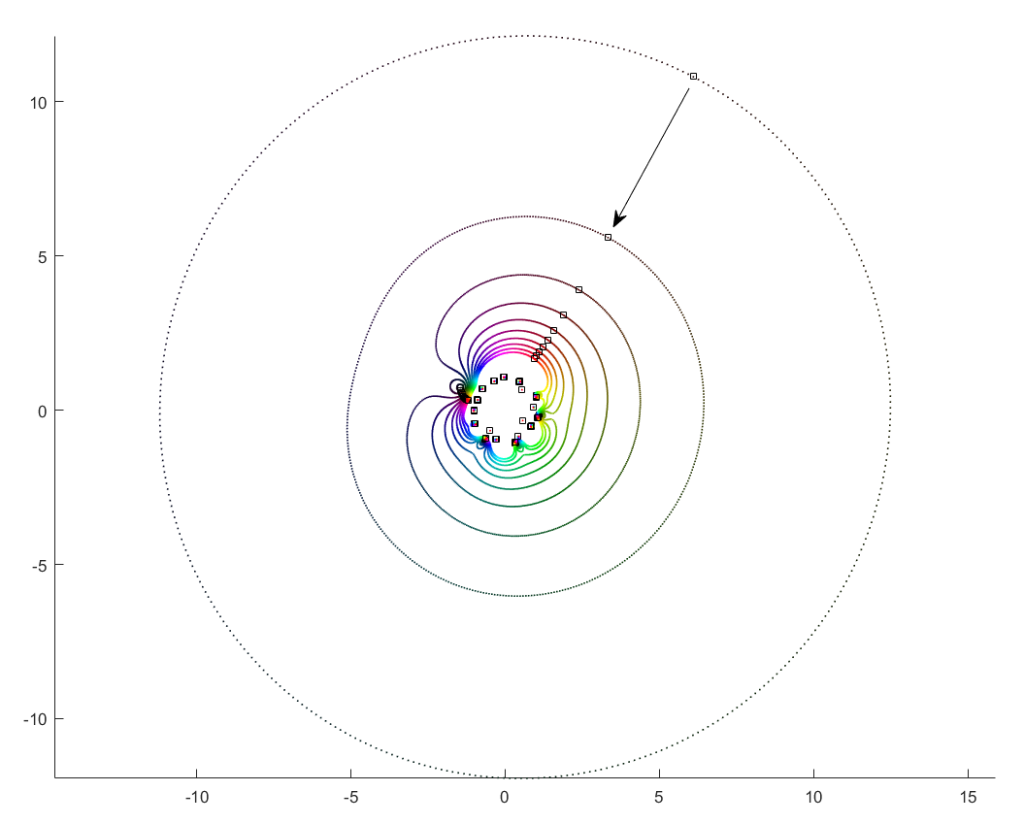

The real question is: what determines the cycles? To understand that, we need to change not just the argument but also the magnitude of the leading coefficient.

What happens if we slowly increase the magnitude of the leading term, letting $a_n = re^{i\theta}$ for an $r$ that increases from zero? It turns out that a new zero of the function zooms in from infinity towards the unit circle. A way of seeing this is to look at the polynomial as $a_n z^n + Q(z)$, where $Q(z) = c_{n-1} z^{n-1} + \dots + c_1 z + c_0$ collects the lower-order terms: the second term is nonzero and large in most places, so if $a_n$ is small the $z^n$ factor must be large (and opposite) to outweigh it and cause a zero. The exception is of course close to the zeros of $Q(z)$, where the perturbation just moves them a tiny bit: there is a counterpart for each of the $n-1$ zeros of $Q(z)$ among the zeros of $a_n z^n + Q(z)$. While the new root is approaching from outside, if we play with $\theta$ it will make a turn around the other zeros: it is alone in its orbit, which also encapsulates all the other zeros. Eventually it will start interacting with them, though.
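The balance argument above can be made slightly more explicit. Writing the lower-order part as $Q(z) = c_{n-1} z^{n-1} + \dots + c_0$ (an assumed notation, with $c_{n-1} \neq 0$), a rough sketch for the far-away zero is:

$$
re^{i\theta} z^n + c_{n-1} z^{n-1} + O(z^{n-2}) = 0
\;\Longrightarrow\;
z \approx -\frac{c_{n-1}}{r}\, e^{-i\theta},
\qquad
|z| \approx \frac{|c_{n-1}|}{r} \to \infty \ \text{ as } r \to 0 .
$$

So to leading order the new zero sits on a circle of radius $|c_{n-1}|/r$ and goes around it once, in the opposite sense to $\theta$, while the leading coefficient makes one turn.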

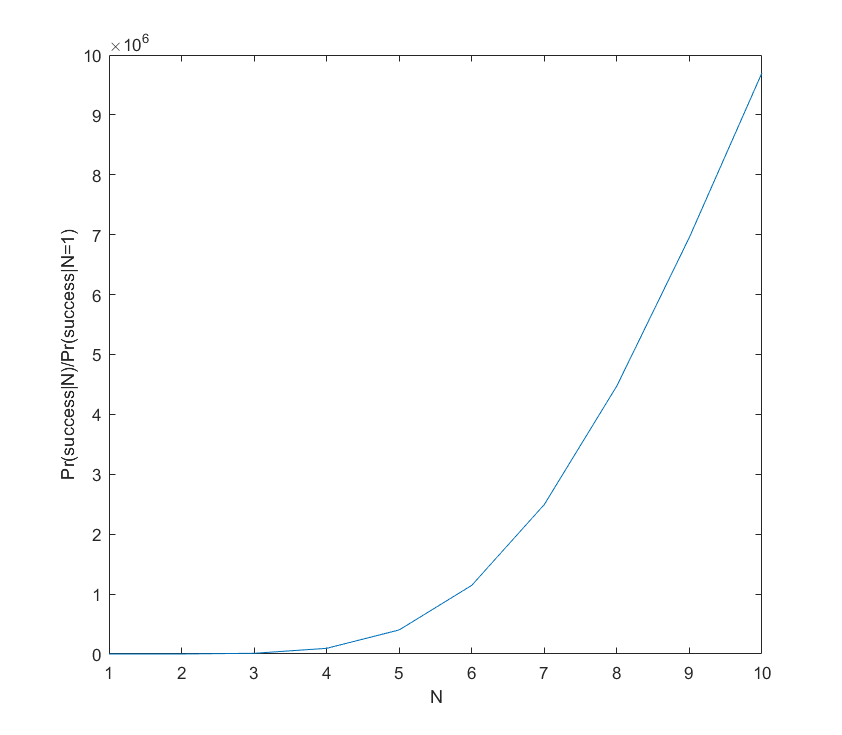

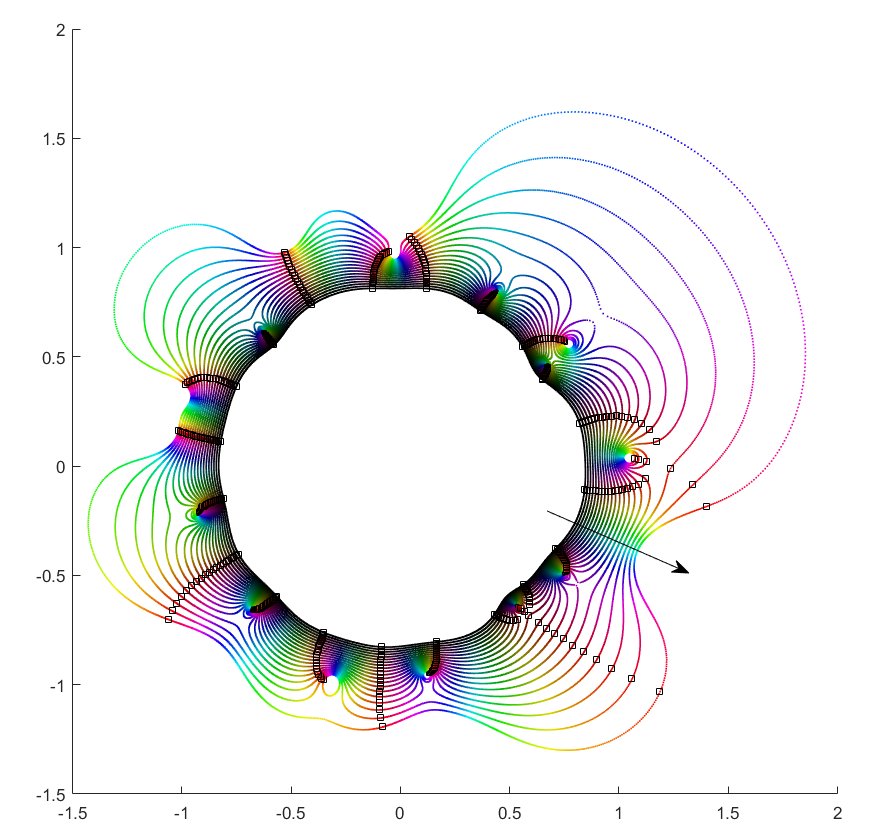

If you instead start out with a large leading term, $re^{i\theta} z^n$ with $r \gg 1$, then the polynomial is essentially $re^{i\theta} z^n + c_0$ (with $c_0$ the constant term) and the zeros are the $n$-th roots of $-c_0 e^{-i\theta}/r$. All zeros belong to the same roughly circular orbit, moving together as $\theta$ makes a rotation. But as $r$ decreases the shared orbit develops bulges and dents, and some zeros pinch off from it into their own small circles. When does the pinching off happen? That corresponds to when two zeros coincide during the orbit: one continues on the big orbit, the other one settles down to be local. This is the one case where the analyticity of how they move depending on $\theta$ breaks down. They still move continuously, but there is a sharp turn in their movement direction. Eventually we end up in the small term case, with a single zero on a large radius orbit as $r \to 0$.
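For the large-magnitude limit, a short worked approximation (in assumed notation, with $r$ and $\theta$ the magnitude and argument of the leading coefficient and $c_0 \neq 0$ the constant term) shows why all zeros share one orbit:

$$
re^{i\theta} z^n + c_0 \approx 0
\;\Longrightarrow\;
z_k(\theta) \approx \left(\frac{|c_0|}{r}\right)^{1/n}
\exp\!\left(i\,\frac{\arg(-c_0) - \theta + 2\pi k}{n}\right),
\qquad k = 0, \dots, n-1 .
$$

Increasing $\theta$ by $2\pi$ turns $z_k$ into $z_{k-1}$ (indices mod $n$), so in this approximation one rotation of the leading coefficient shifts every zero to its neighbour, and the permutation is a single $n$-cycle.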

This pinching off scenario also suggests why it is rare to find shared orbits in general: they occur if two zeros coincide but with others in between them (e.g. if we number them along the orbit, $z_1$ coinciding with $z_k$, with $z_2$ to $z_{k-1}$ separate). That requires a large pinch in the orbit, but since it is overall pretty convex and circle-like this is unlikely.

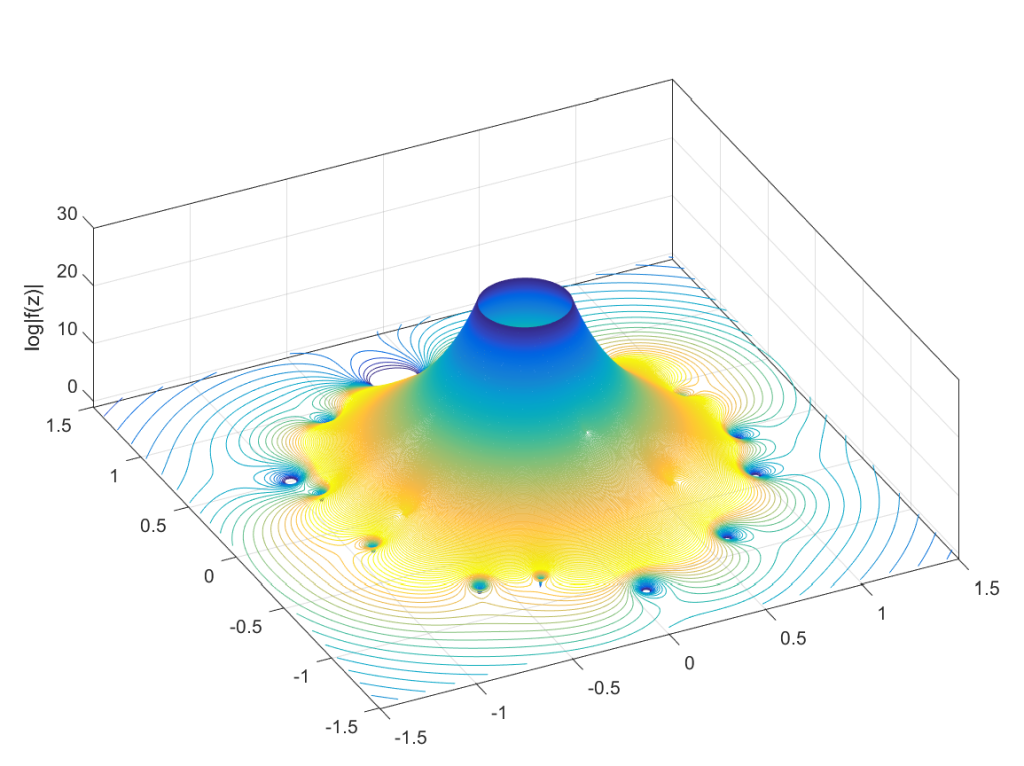

Allowing $r$ to run from $\infty$ to 0 and $\theta$ over $[0, 2\pi]$ would cover the entire complex plane (except maybe the origin): for each $z$, there is some leading coefficient $a_n = re^{i\theta}$ where $a_n z^n + Q(z) = 0$, with $Q(z)$ the lower-order terms. This is fairly obviously $a_n(z) = -Q(z)/z^n$. This function has a central pole, surrounded by zeros corresponding to the zeros of $Q(z)$. The orbits we have drawn above correspond to level sets $|a_n(z)| = r$, and the pinching off to saddle points of this surface. To get a multi-zero orbit several zeros need to be close together enough to cause a broad valley.
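The saddle points can be located directly. For the function $-Q(z)/z^n$, with $Q$ the lower-order part of the polynomial, the derivative vanishes where $zQ'(z) = nQ(z)$, and at those points the full polynomial has a double zero. The sketch below computes them with NumPy; the function name `pinch_points` and the example coefficients are hypothetical, and it assumes a nonzero constant term so that no critical point sits at the origin.

```python
import numpy as np

def pinch_points(lower):
    """Critical points of a(z) = -Q(z)/z**n and the leading coefficients there.

    lower: coefficients of Q, highest power first (so n = len(lower)).
    Returns (crit, a): at a[k], the polynomial a[k]*z**n + Q(z) has a double
    zero at crit[k]; abs(a[k]) and angle(a[k]) give the magnitude and argument
    of the leading coefficient at which two zeros collide.
    """
    lower = np.asarray(lower, dtype=complex)
    n = lower.size
    # z*Q'(z): appending a zero constant term multiplies Q' by z.
    zq_prime = np.concatenate((np.polyder(lower), [0.0]))
    crit = np.roots(zq_prime - n * lower)   # solves z*Q'(z) = n*Q(z)
    a = -np.polyval(lower, crit) / crit**n
    return crit, a

if __name__ == "__main__":
    lower = np.poly([1.0, -1.0, 1.5j])      # example Q with zeros 1, -1, 1.5i
    crit, a = pinch_points(lower)
    for z, ak in zip(crit, a):
        print(f"pinch at z = {z:.3f} for r = {abs(ak):.3f}, theta = {np.angle(ak):.3f}")
```

Sorting the values `abs(a)` gives the magnitudes at which, as the leading term shrinks, one would expect successive zeros to pinch off from the shared orbit.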

There you have it, a rough theory of dancing zeros.