I blog at Practical Ethics on Kuwait’s mandatory DNA handover law, and why it does not work ethically.

Biotechnology

A clean well-lighted challenge: those eyes

On Extropy-chat my friend Spike suggested a fun writing challenge:

“So now I have a challenge for you. Write a Hemmingway-esque story (or a you-esque story if you are better than Papa) which will teach me something, anything. The Hemmingway story has memorable qualities, but taught me nada. I am looking for a short story that is memorable and instructive, on any subject that interests you. Since there is so much to learn in this tragically short life, the shorter the story the better, but it should create memorable images like Hemmingway’s Clean, it must teach me something, anything.”

Here is my first attempt. (V 1.1, slightly improved from my list post and with some links). References and comments below.

Those eyes

“Customers!”

“Ah, yes, customers.”

“Cannot live with them, cannot live without them.”

“So, who?”

“The optics guys.”

“Those are the worst.”

“I thought that was the security guys.”

“Maybe. What’s the deal?”

“Antireflective coatings. Dirt repelling.”

“That doesn’t sound too bad.”

“Some of the bots need to have diffraction spread, some should not. Ideally determined just when hatching.”

“Hatching? Self-assembling bots?”

“Yes. Can not do proper square root index matching in those. No global coordination.”

“Crawly bugbots?”

“Yes. Do not even think about what they want them for.”

“I was thinking of insect eyes.”

“No. The design is not faceted. The optics people have some other kind of sensor.”

“Have you seen reflections from insect eyes?”

“If you shine a flashlight in the garden at night you can see jumping spiders looking back at you.”

“That’s their tapeta, like a cat’s. I was talking about reflections from the surface.”

“I have not looked, to be honest.”

“There aren’t any glints when light glances across fly eyes. And dirt doesn’t stick.”

“They polish them a lot.”

“Sure. Anyway, they have nipples on their eyes.”

“Nipples?”

“Nipple-like nanostructures. A whole field of them on the cornea.”

“Ah, lotus coatings. Superhydrophobic. But now you get diffraction and diffraction glints.”

“Not if they are sufficiently randomly distributed.”

“It needs to be an even density. Some kind of Penrose pattern.”

“That needs global coordination. Think Turing pattern instead.”

“Some kind of tape?”

“That’s Turing machine. This is his last work from ’52, computational biology.”

“Never heard of it.”

“It uses two diffusing signal substances: one that stimulates production of itself and an inhibitor, and the inhibitor diffuses further.”

“So a blob of the first will be self-supporting, but have a moat where other blobs cannot form.”

“Yep. That is the classic case. It all depends on the parameters: spots, zebra stripes, labyrinths, even moving leopard spots and oscillating modes.”

“All generated by local rules.”

“You see them all over the place.”

“Insect corneas?”

“Yes. Some Russians catalogued the patterns on insect eyes. They got the entire Turing catalogue.”

“Changing the parameters slightly presumably changes the pattern?”

“Indeed. You can shift from hexagonal nipples to disordered nipples to stripes or labyrinths, and even over to dimples.”

“Local interaction, parameters easy to change during development or even after, variable optics effects.”

“Stripes or hexagons would do diffraction spread for the bots.”

“Bingo.”

References and comments

My old notes on models of development for a course, with a section on Turing patterns. There are many far better introductions, of course.

Nanostructured chitin can do amazing optics stuff, like the wings of the Morpho butterfly: P. Vukusic, J.R. Sambles, C.R. Lawrence, and R.J. Wootton (1999). “Quantified interference and diffraction in single Morpho butterfly scales”. Proceedings of the Royal Society B 266 (1427): 1403–11.

Another cool example of insect nano-optics: Land, M. F., Horwood, J., Lim, M. L., & Li, D. (2007). Optics of the ultraviolet reflecting scales of a jumping spider. Proceedings of the Royal Society of London B: Biological Sciences, 274(1618), 1583-1589.

One point Blagodatski et al. make is that the different eye patterns are scattered all over the insect phylogenetic tree: since the parameters are easy to change, one can get whatever surface is needed by just turning a few genetic knobs (the same goes for, say, snake skin patterns or the number of digits in mammals). I found a local paper that tries to work out phylogenies by maximum likelihood inference from pattern settings. While that paper was pretty optimistic about being able to figure out phylogenies this way, I suspect the Blagodatski paper shows that the patterns can change so quickly that this will only be applicable to closely related species.
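The mechanism in the story (a self-stimulating substance plus an inhibitor that diffuses further) can be checked with a small linear stability calculation. What follows is only a sketch: the Jacobian entries and diffusion constants are made-up illustrative numbers, not taken from Turing's paper or from Blagodatski et al. It asks whether a small ripple of wavenumber k grows or decays around a uniform state.

```python
import numpy as np

# Hypothetical linearised kinetics around a uniform steady state.
# Illustrative numbers only. Row 0: activator, row 1: inhibitor.
# The activator boosts itself and the inhibitor; the inhibitor damps both.
JACOBIAN = np.array([[1.0, -1.0],
                     [2.0, -1.5]])


def growth_rates(jacobian, diffusion, wavenumbers):
    """Largest real part of the eigenvalues of J - k^2 D for each k."""
    rates = []
    for k in wavenumbers:
        matrix = jacobian - (k ** 2) * np.diag(diffusion)
        rates.append(np.linalg.eigvals(matrix).real.max())
    return np.array(rates)


wavenumbers = np.linspace(0.0, 3.0, 301)

# Same diffusion for both substances: every ripple decays.
equal_diffusion = growth_rates(JACOBIAN, [1.0, 1.0], wavenumbers)

# Inhibitor diffuses ten times faster: a band of wavenumbers grows.
fast_inhibitor = growth_rates(JACOBIAN, [1.0, 10.0], wavenumbers)

print("max growth rate, equal diffusion:", equal_diffusion.max())
print("max growth rate, fast inhibitor: ", fast_inhibitor.max())
print("fastest-growing wavenumber:      ", wavenumbers[fast_inhibitor.argmax()])
```

With these assumed numbers the uniform state is stable when both substances spread equally, and only becomes unstable at a finite wavelength once the inhibitor spreads much further. That finite wavelength is what sets the spacing of the spots or stripes; which of those shapes actually appears depends on the nonlinear terms this sketch leaves out.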

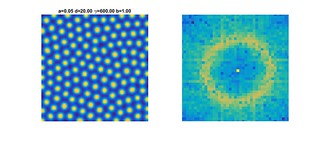

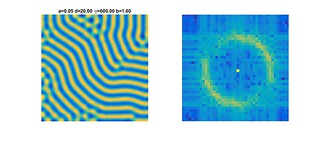

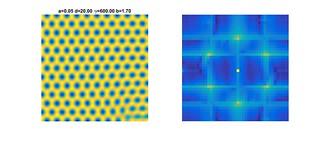

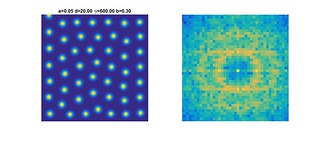

It is fun to look at how the Fourier transform changes as the parameters of the pattern change:

In this case I move the parameter b up from a low value to a higher one. At first I get “leopard spots” that divide and repel each other (very fun to watch), arraying themselves to fit within the boundary. This produces the vertical and horizontal stripes in the Fourier transform. As b increases the spots form a more random array, and there is no particular direction favoured in the transform: there is just an annulus around the center, representing the typical inter-blob distance. As b increases more, the blobs merge into stripes. For these parameters they snake around a bit, producing an annulus of uneven intensity. At higher values they merge into a honeycomb, and now the annulus collapses to six peaks (plus artefacts from the low resolution).
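The link between pattern and transform can be reproduced without running a reaction-diffusion model. This sketch uses synthetic patterns built from plain cosines as stand-ins for the simulated ones (an assumption for illustration, not the simulation behind the images above), and counts the strong peaks in the power spectrum. The wavevectors are chosen as integers so the peaks fall exactly on grid points; the "honeycomb" is therefore only approximately hexagonal.

```python
import numpy as np


def power_spectrum(pattern):
    """Centred 2D power spectrum of a real-valued pattern."""
    centred = pattern - pattern.mean()            # drop the constant term
    spectrum = np.fft.fftshift(np.fft.fft2(centred))
    return np.abs(spectrum) ** 2


def count_peaks(power, fraction=0.5):
    """Number of frequency bins above `fraction` of the strongest bin."""
    return int(np.sum(power > fraction * power.max()))


def dominant_radius(power):
    """Distance of the strongest bin from the centre, in frequency bins."""
    rows, cols = power.shape
    row, col = np.unravel_index(np.argmax(power), power.shape)
    return float(np.hypot(row - rows // 2, col - cols // 2))


def plane_wave(size, kx, ky):
    """Cosine with integer wavevector (kx, ky) on a size x size grid."""
    y, x = np.mgrid[0:size, 0:size]
    return np.cos(2 * np.pi * (kx * x + ky * y) / size)


SIZE = 128

# Straight stripes: a single wave.
stripes = plane_wave(SIZE, 8, 0)

# Near-hexagonal array: three waves roughly 60 degrees apart.
honeycomb = (plane_wave(SIZE, 8, 0)
             + plane_wave(SIZE, 4, 7)
             + plane_wave(SIZE, -4, 7))

for name, pattern in [("stripes", stripes), ("honeycomb", honeycomb)]:
    power = power_spectrum(pattern)
    print(name, "peaks:", count_peaks(power),
          "radius:", round(dominant_radius(power), 2))
```

The radius of the peaks is the inverse of the typical spacing in the pattern. A disordered array of blobs has the same spacing but no preferred direction, so its peaks smear out into the annulus described above.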

Brewing bad policy

The New York Times reports that yeast has been modified to make THC. The paper describing the method uses precursor molecules, so it is not a hugely radical step forward. Still, it dovetails nicely with the recent paper in Science about full biosynthesis of opiates from sugar (still not very efficient compared to plants, though). Already this spring there was a comment piece in Nature about how to regulate the possibly imminent drug microbrewing, which I commented on at Practical Ethics.

Rob Carlson has an excellent commentary on the problems with the regulatory reflex about new technology. He is basically arguing a “first, do no harm” principle for technology policy.

Policy conversations at all levels regularly make these same mistakes, and the arguments are nearly uniform in structure. “Here is something we don’t know about, or are uncertain about, and it might be bad – really, really bad – so we should most certainly prepare policy options to prevent the hypothetical worst!” Exclamation points are usually just implied throughout, but they are there nonetheless. The policy options almost always involve regulation and restriction of a technology or process that can be construed as threatening, usually with little or no consideration of what that threatening thing might plausibly grow into, nor of how similar regulatory efforts have fared historically.

This is such a common conversation that in many fields like AI even bringing up that there might be a problem makes practitioners think you are planning to invoke regulation. It fits with the hyperbolic tendency of many domains. For the record, if there is one thing we in the AI safety research community agree on, it is that more research is needed before we can give sensible policy recommendations.

Figuring out what policies can work requires understanding what the domain actually is about (including what it can actually do, what it likely will be able to do one day, and what it cannot do), how different policy options have actually worked in the past, and what policy options actually exist in policy-making. This requires a fair bit of interdisciplinary work between researchers and policy professionals. Clearly we need more forums where this can happen.

And yes, even existential risks need to be handled carefully like this. If their importance overshadows everything, then getting policies that actually reduce the risk is a top priority: dramatic, fast policies do not guarantee working risk reduction, and once a policy is in place it is hard to shift. For most low-probability threats we do not gain much survival by rushing policies into place compared to getting better policies.

The limits of the in vitro burger

Stepping on toes everywhere in our circles, Ben Levinstein and I have a post at Practical Ethics about the limitations of in vitro meat for reducing animal suffering.

The basic argument is that while factory farming produces a lot of suffering, a post-industrial world would likely have very few lives of the involved species. It would be better if they had better lives and larger populations instead. So, at least in some views of consequentialism, the ethical good of in vitro meat is reduced from a clear win to possibly even a second best to humane farming.

An analogy can be made with horses, whose population has declined precipitously since the pre-tractor, pre-car days. Current horses live (I guess) nicer lives than the more work-oriented horses of 1900, but there are far fewer of them. So even if the current 3 million horses in the US have lives (say) twice as good as the 25 million horses of the 1920s, the total value has still declined. However, factory farmed animals may have lives that are not worth living, holding negative value. If we assume the roughly 50 billion chickens in the world all have lives of value -1 each, then replacing them with in vitro meat would make the world 50 billion units better. But this could also be achieved by making their lives one unit better (and why stop there? maybe they could get two units more). Whether it matters how many entities are experiencing depends on your approach, as does whether it is an extra value if there is a chicken species around rather than not.
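The bookkeeping behind these numbers is simple enough to spell out. The sketch below assumes a plain totalist sum (number of lives times value per life), which is only one of the views the argument touches on; the populations and per-life values are the guesses from the paragraph above, not data.

```python
def total_value(population, value_per_life):
    """Total welfare under a simple totalist sum (an assumed view)."""
    return population * value_per_life


# Horses: 25 million lives at value 1 versus 3 million at value 2.
horses_1920s = total_value(25_000_000, 1.0)
horses_today = total_value(3_000_000, 2.0)

# Chickens: 50 billion lives at an assumed value of -1 each.
CHICKENS = 50_000_000_000
status_quo = total_value(CHICKENS, -1.0)
in_vitro = total_value(0, 0.0)                 # no farmed chickens left
one_unit_better = total_value(CHICKENS, 0.0)   # same population, value 0
two_units_better = total_value(CHICKENS, 1.0)  # same population, value +1

print("horse total, 1920s vs today:", horses_1920s, horses_today)
print("gain from in vitro meat:    ", in_vitro - status_quo)
print("gain from +1 per life:      ", one_unit_better - status_quo)
print("gain from +2 per life:      ", two_units_better - status_quo)
```

On this accounting, removing the chickens and lifting each life by one unit come out the same, and any further improvement only counts if the animals are still around to have it.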

Now, I am not too troubled by this since I think in vitro meat is also very good from a health perspective, a climate perspective, and an existential risk reduction perspective (it is good for space colonization and survival if sunlight is interrupted). But I think most people come to in vitro meat from an ethical angle. And given just that perspective, we should not be too complacent that in the future we will become postagricultural: it may take time, and it might actually not increase total welfare as much as we expected.

Brewing more than booze

Over on Practical Ethics I blog about how to handle production of opiates from bioengineered yeast.

The basic problem is that opiates seem to be unusually harmful (rather nasty dependency, social withdrawal and risky methods of administration), yet restricting access looks hard in the long run. I don’t subscribe to the view that mere exposure will turn all people into addicts (it looks like it is a subset of people who are vulnerable), but there is a fair bit of harm here that likely is not outweighed by cheapness and better quality. Yet proposed methods restricting access to the modified yeast are unlikely to work in the long run, and may have some bad effects of their own.

My own solution is to recognize that in 10-20 years it will be possible to brew many strong drugs discreetly at home, and that we need to reduce the harm from this by developing other technologies that make them less problematic. It might sound wussy and complex compared to the more easily actionable targets suggested in the article, but I think it has a greater chance of actually reducing harms in the long run than policies that merely delay the broad arrival of microbrew drugs.

Baby interrupted

Francesca Minerva and I have a new paper out: Cryopreservation of Embryos and Fetuses as a Future Option for Family Planning Purposes (Journal of Evolution and Technology – Vol. 25 Issue 1 – April 2015 – pgs 17-30).

Basically, we analyse the ethics of cryopreserving fetuses, especially as an alternative to abortion. While technologically we do not yet have any means to bring a separated (let alone cryopreserved) fetus to term, it is not inconceivable that advances in ectogenesis (artificial wombs) or biotechnological production of artificial placentas allowing reimplantation could be achieved. And a cryopreserved fetus would have all the time in the world, just like an adult cryonics patient.

It is interesting to see how many of the standard ethical arguments against abortion fare when dealing with cryopreservation. There is no killing, personhood is not affected, there is no loss of value of the future – just a long delay. One might be concerned that fetuses will not be reimplanted but just left in limbo forever, but clearly this is a better state than being irreversibly aborted: cryopreservation can (eventually) be reversed. I think our paper shows that (regardless of what one thinks of cryonics) the irreversibility is the key ethical issue in abortion.

In the end, it will likely take a long time before this is a viable option. But it seems that there are good reasons to consider cryopreservation and reimplantation of fetuses: animal husbandry, space colonisation, various medical treatments (consider “interrupting” an ongoing pregnancy because the mother needs cytostatic treatment), and now this family planning reason.

Crispy embryos

Researchers at Sun Yat-sen University in Guangzhou have edited the germline genome of human embryos (paper). They used the ever more popular CRISPR/Cas9 method to try to modify the gene involved in beta-thalassaemia in non-viable leftover embryos from a fertility clinic.

As usual there is a fair bit of handwringing, especially since there was a recent call for a moratorium on this kind of thing from one set of researchers, and a more liberal (yet cautious) response from another set. As noted by ethicists, many of the ethical concerns are actually somewhat confused.

That germline engineering can have unpredictable consequences for future generations is just as true of normal reproduction. More strongly, somebody making the case that (say) race mixing should be hindered because of unknown future effects would be condemned as a racist: we have overarching reasons to allow people to live and procreate freely that morally overrule worries about their genetic endowment – even if there actually were genetic issues (as far as I know all branches of the human family are equally interfertile, but this might just be a historical contingency). For a possible future effect to matter morally it needs to be pretty serious and we need to have some real reason to think it is more likely to happen because of the actions we take now. A vague unease or a mere possibility is not enough.

However, the paper actually gives a pretty good argument for why we should not try this method in humans. They found that the efficiency of the repair was about 50%, but more worryingly that there were off-target mutations and that a similar gene was accidentally modified. These are good reasons not to try it. Not unexpected, but very helpful in that we can actually make informed decisions both about whether to use it (clearly not until the problems have been fixed) and what needs to be investigated (how can it be done well? why does it work worse here than advertised?).

The interesting thing about the paper is that its fairly negative results, which should reduce interest in human germline changes, are nevertheless denounced as unethical. It is hard to make this claim stick, unless one buys into the view that germline changes to human embryos are intrinsically bad. The embryos could not develop into persons and would have been discarded by the fertility clinic, so there was no possible future person being harmed (if one thinks fertilized but non-viable embryos deserve moral protection one has other big problems). The main fear seems to be that if the technology is demonstrated many others will follow, but an early negative result would seem to weaken this slippery slope argument.

I think the real reason people think there is an ethical problem is the association of germline engineering with “designer babies”, and the conditioning that designer babies are wrong. But they can’t be wrong for no reason: there has to be an ethics argument for their badness. There is no shortage of such arguments in the literature, ranging from ideas of the natural order, human dignity, accepting the given, the importance of an open-ended life to issues of equality, just to mention a few. But none of these are widely accepted as slam-dunk arguments that conclusively show designer babies are wrong: each of them also has vigorous criticisms. One can believe one or more of them to be true, but it would be rather premature to claim that settles the debate. And even then, most of these designer baby arguments are irrelevant for the case at hand.

All in all, it was a useful result that probably will reduce both risky and pointless research and focus on what matters. I think that makes it quite ethical.