[Update: I have a paper version of this essay on arXiv:1807.10553, extending and correcting some of the results.]

On Physics Stackexchange billybodega asked the question:

Supposing that the entire Earth was instantaneously replaced with an equal volume of closely packed, but uncompressed blueberries, what would happen from the perspective of a person on the surface?

Unfortunately the site tends to frown on fun questions like this, so it was in my opinion prematurely closed while I was working out the answer. So here it is, with some extra extensions:

The density of blueberries has been estimated at 625.56 kg/m3, and WillO on Stackexchange estimated it at 13% of Earth’s density (5510*0.13=716.3 kg/m3), so assuming it to be around $\rho_b = 700$ kg/m3 appears to be reasonable. Blueberry pulp has a density similar to water, 980 to 1050 kg per m3, although this depends on both the temperature and the solids content. The difference from the whole berries is due to the air between the berries. Note that these are likely the big, thick-skinned “American” blueberries rather than the small wild thin-skinned blueberries (bilberries) I grew up with; the latter would have higher density due to their smaller size and break far more easily.

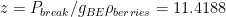

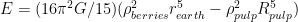

So instantaneously turning Earth into blueberries will reduce its mass to 0.1274 of what it was. Gravity will become correspondingly weaker, $g_{BE} = 0.1274\,g_\oplus \approx 1.25$ m/s2.
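As a rough check of the scaling above, here is a minimal sketch. It assumes a packed-berry density of 700 kg/m3 and a standard mean Earth density of 5514 kg/m3 (neither constant is taken verbatim from the post), so the ratio comes out marginally below the 0.1274 quoted.

```python
# Rough check of the mass ratio and surface gravity right after the swap.
RHO_BERRY = 700.0   # kg/m^3, packed whole berries (assumed round value)
RHO_EARTH = 5514.0  # kg/m^3, Earth's mean density (standard value, an assumption here)
G_EARTH = 9.81      # m/s^2, present-day surface gravity

# Same volume, lower density: mass scales with the density ratio.
mass_ratio = RHO_BERRY / RHO_EARTH

# Same radius, so surface gravity scales with the mass.
g_berry = mass_ratio * G_EARTH

print(f"mass ratio: {mass_ratio:.3f}")
print(f"surface gravity: {g_berry:.2f} m/s^2")
```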

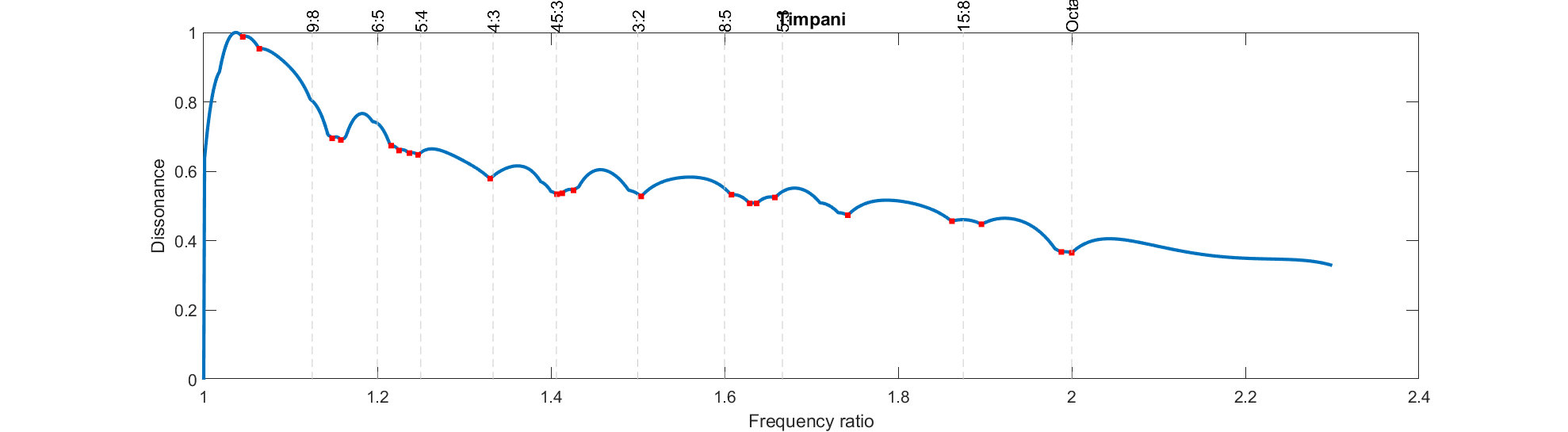

However, blueberries are not particularly sturdy. While there is a literature on blueberry mechanics (of course!), I did not manage to find a great source on their compressive strength. A rough estimate is possible: stacking a sugar cube (1 g) on a berry will not break it, while a milk carton (1 kg) will; 100 g has a decent but not certain chance. So if we assume the blueberry area to be one square centimetre the breaking pressure is on the order of $P_{break} = 10^4$ N/m2. This allows us to estimate at what depth the berries will start to break: $h = P_{break}/(\rho_b g_{BE}) \approx 11$ m, where $\rho_b$ is the berry density and $g_{BE}$ the reduced surface gravity. So while the surface will be free blueberries they will start pulping within a few meters of the surface.
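The breaking-depth estimate can be sketched as follows. The 100 g threshold and the one square centimetre contact area come from the paragraph above; the berry density of 700 kg/m3 and the variable names are assumptions for illustration.

```python
# Order-of-magnitude estimate of the depth at which berries start to burst.
G_EARTH = 9.81             # m/s^2, the kitchen experiment happens on today's Earth
G_BERRY = 0.1274 * G_EARTH # m/s^2, surface gravity of blueberry earth
RHO_BERRY = 700.0          # kg/m^3, assumed bulk density of packed berries

load_mass = 0.1            # kg, the 100 g load that sometimes breaks a berry
contact_area = 1e-4        # m^2, one square centimetre

# Pressure exerted by the load on the berry.
p_break = load_mass * G_EARTH / contact_area

# Depth at which the overlying berries exert the same pressure.
depth = p_break / (RHO_BERRY * G_BERRY)

print(f"breaking pressure: {p_break:.0f} N/m^2")
print(f"pulping starts at roughly {depth:.0f} m depth")
```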

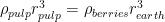

This pulping has an important effect: the pulp separates from the air, coalescing into a smaller sphere. If we assume pulp to be an incompressible fluid of density $\rho_p \approx 1000$ kg/m3, then a sphere of pulp with the same mass as the initial berries will have a radius $R_p$ given by $\rho_p R_p^3 = \rho_b R_\oplus^3$, or $R_p = R_\oplus (\rho_b/\rho_p)^{1/3}$. In this case we end up with a planet with 0.8879 times the original radius (5,657 km), surrounded by a vast atmosphere.
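The shrinkage follows from mass conservation alone. A minimal sketch, assuming round densities of 700 kg/m3 for packed berries and 1000 kg/m3 for pulp:

```python
# Radius of the pulp sphere that has the same mass as the berry sphere.
R_EARTH_KM = 6371.0  # km, mean Earth radius
RHO_BERRY = 700.0    # kg/m^3, packed berries (assumed)
RHO_PULP = 1000.0    # kg/m^3, air-free pulp (assumed, close to water)

# Equal mass: rho_berry * R_earth^3 == rho_pulp * R_pulp^3
shrink = (RHO_BERRY / RHO_PULP) ** (1.0 / 3.0)
r_pulp_km = shrink * R_EARTH_KM
fall_km = R_EARTH_KM - r_pulp_km

print(f"radius factor: {shrink:.4f}")
print(f"pulp radius: {r_pulp_km:.0f} km, surface drops by {fall_km:.0f} km")
```

The drop of the surface is the same 714 km that an observer would fall during the collapse.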

The freefall timescale for the planet is initially 41 minutes, but relatively shortly the pulping interactions, the air convection etc. will slow things down in a complicated way. I expect that the actual coalescence will take hours, with some bubbles from the deep interior erupting fairly late.

The gravity on the pulp surface is just 1.5833 m/s2, 16% of normal gravity – almost exactly lunar gravity. This weakens convection currents and the speed with which bubbles move up. The scale height of the atmosphere, assuming the same composition and temperature as on Earth, will be 6.2 times higher. This means that pressure will decline much less with altitude, allowing far thicker clouds and weather systems. As we will see, the atmosphere will puff up more.
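These figures can be reproduced approximately with a short sketch. The free-fall formula for a uniform sphere, $\sqrt{3\pi/(32 G \rho)}$, is my assumption about how the timescale was obtained, and the constants are rounded, so the last digits differ slightly from those quoted.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2, gravitational constant
R_EARTH = 6.371e6  # m
RHO_BERRY = 700.0  # kg/m^3, assumed
RHO_PULP = 1000.0  # kg/m^3, assumed
G_EARTH = 9.81     # m/s^2

# Mass of an Earth-sized ball of berries and radius of the resulting pulp ball.
mass = RHO_BERRY * 4.0 / 3.0 * math.pi * R_EARTH**3
r_pulp = R_EARTH * (RHO_BERRY / RHO_PULP) ** (1.0 / 3.0)

# Free-fall time of a uniform sphere (assumed formula).
t_ff_min = math.sqrt(3.0 * math.pi / (32.0 * G * RHO_BERRY)) / 60.0

# Surface gravity on the pulp sphere.
g_pulp = G * mass / r_pulp**2

# Scale height is kT/(m g), so at fixed temperature and composition
# it is inversely proportional to g.
scale_height_ratio = G_EARTH / g_pulp

print(f"free-fall time: {t_ff_min:.0f} min")
print(f"pulp surface gravity: {g_pulp:.2f} m/s^2")
print(f"scale height ratio: {scale_height_ratio:.1f}")
```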

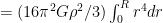

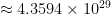

The separation has big consequences. Enormous amounts of air will be pushing out from the pulp as bubbles and jets, producing spectacular geysers (especially since the gravity is low). Even more dramatic is the heating: a lot of gravitational energy is released as the mass is compacted. The total gravitational energy of a constant density sphere of radius R is

$$\int_0^R \frac{G\,[4\pi r^2 \rho]\,[4 \pi r^3 \rho/3]}{r}\,dr$$

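(the first factor in the integral is the mass of a spherical shell of radius r, the second the mass of the stuff inside, and the third the 1/r gravitational potential). The integral evaluates to $16\pi^2 G \rho^2 R^5/15 = 3GM^2/(5R)$ for a sphere of total mass M. If we ignore the mass of the air since it is small and we just want an order of magnitude estimate, the compression of the berry mass gives energy

$$E = \frac{3GM^2}{5}\left(\frac{1}{R_p} - \frac{1}{R_\oplus}\right) \approx 4.4 \times 10^{29}\ \text{J}.$$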

This is the energy output of the sun over half an hour, nothing to sneeze at: blueberry earth will become hot. There is about 573,000 J per kg, enough to heat the blueberries from freezing to boiling.
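The energy release can be sketched numerically using the closed form $3GM^2/(5R)$ for a uniform sphere. With the rounded constants assumed here the result lands slightly above the per-kilogram figure quoted, but at the same order of magnitude and with the same conclusion.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
R_EARTH = 6.371e6  # m
RHO_BERRY = 700.0  # kg/m^3, assumed
RHO_PULP = 1000.0  # kg/m^3, assumed
C_WATER = 4186.0   # J/(kg K), specific heat of liquid water (stand-in for jam)

mass = RHO_BERRY * 4.0 / 3.0 * math.pi * R_EARTH**3
r_pulp = R_EARTH * (RHO_BERRY / RHO_PULP) ** (1.0 / 3.0)

def binding_energy(m, r):
    """Gravitational binding energy of a uniform sphere, 3GM^2/(5R)."""
    return 3.0 * G * m**2 / (5.0 * r)

# Energy released when the berry sphere contracts to the pulp sphere.
energy = binding_energy(mass, r_pulp) - binding_energy(mass, R_EARTH)
energy_per_kg = energy / mass

# Heat needed to bring a kilogram of water from 0 C to 100 C.
heat_to_boiling = C_WATER * 100.0

print(f"released energy: {energy:.2e} J")
print(f"per kilogram: {energy_per_kg:.0f} J/kg")
print(f"needed to reach boiling: {heat_to_boiling:.0f} J/kg")
```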

The result is that blueberry earth will turn into a roaring ocean of boiling jam, with the geysers of released air and steam likely ejecting at least a few berries into orbit (escape velocity is just 4.234 km/s, and berries at the initial surface will be even higher up in the potential). As the planet evolves a thick atmosphere of released steam will add to the already considerable air from the berries. It is not inconceivable that the planet may heat up further due to a water vapour greenhouse effect, turning into a very odd Venusian world.
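For reference, the escape velocity from the pulp surface can be checked with a few lines. The densities and radius are the same assumed round values as before, so the result agrees with the quoted figure only to about two decimal places.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
R_EARTH = 6.371e6  # m
RHO_BERRY = 700.0  # kg/m^3, assumed
RHO_PULP = 1000.0  # kg/m^3, assumed

mass = RHO_BERRY * 4.0 / 3.0 * math.pi * R_EARTH**3
r_pulp = R_EARTH * (RHO_BERRY / RHO_PULP) ** (1.0 / 3.0)

# Escape velocity from the surface of the pulp sphere.
v_escape_km_s = math.sqrt(2.0 * G * mass / r_pulp) / 1000.0

# Speed of a circular orbit skimming the surface, for comparison.
v_orbit_km_s = v_escape_km_s / math.sqrt(2.0)

print(f"escape velocity: {v_escape_km_s:.2f} km/s")
print(f"low orbit speed: {v_orbit_km_s:.2f} km/s")
```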

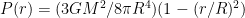

Meanwhile the jam ocean is very deep, and the pressure at depth will be enough to cause the formation of high pressure ice even if it is warm. If the formation process is slow there will be some separation of water into ice and a concentration of other chemicals in the jam ocean, but I suspect the rapid collapse will instead make some kind of composite pulp ice. Ice VII forms above about 3 GPa, so if we just use constant gravity this happens at a depth $z = P_{VII}/(\rho_p g) \approx 1{,}900$ km, leaving an ice region that reaches out to about two-thirds of the radius. This would make up most of the interior. However, gravity is a bit weaker in the interior, so we need to take that into account. The pressure from all the matter above radius r is $P(r) = \frac{2\pi}{3} G \rho_p^2 (R_p^2 - r^2)$, and the ice core will have radius $r_{VII} = \sqrt{R_p^2 - 3P_{VII}/(2\pi G \rho_p^2)} \approx$ 3,258 km. This is smaller, about 57% of the radius, and just 20% of the total volume.
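The ice core estimate can be sketched for a uniform-density sphere, where $g(r)$ grows linearly with r and the pressure is $\frac{2\pi}{3} G \rho^2 (R^2 - r^2)$. The transition pressure of 3 GPa is an assumption: it is the value that roughly reproduces the core radius quoted above, and rounded constants shift the result by a few tens of kilometres.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
RHO_PULP = 1000.0  # kg/m^3, assumed incompressible pulp
R_PULP = 5.657e6   # m, radius of the pulp planet
P_VII = 3.0e9      # Pa, assumed pressure at which ice VII forms

def pressure(r):
    """Hydrostatic pressure at radius r inside a uniform-density sphere."""
    return 2.0 * math.pi / 3.0 * G * RHO_PULP**2 * (R_PULP**2 - r**2)

# Naive estimate: constant surface gravity all the way down.
g_surface = 4.0 / 3.0 * math.pi * G * RHO_PULP * R_PULP
depth_const_km = P_VII / (RHO_PULP * g_surface) / 1000.0

# Proper estimate: solve pressure(r) == P_VII for r.
r_core = math.sqrt(R_PULP**2 - 3.0 * P_VII / (2.0 * math.pi * G * RHO_PULP**2))
radius_fraction = r_core / R_PULP
volume_fraction = radius_fraction**3

print(f"constant-gravity depth: {depth_const_km:.0f} km")
print(f"central pressure: {pressure(0.0) / 1e9:.1f} GPa")
print(f"ice core radius: {r_core / 1000.0:.0f} km")
print(f"radius fraction {radius_fraction:.2f}, volume fraction {volume_fraction:.2f}")
```

Note that the central pressure in this model is only about 4.5 GPa, so the transition pressure chosen has a large effect on the size of the core.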

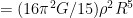

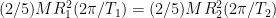

The coalescence will also speed up rotation. The original blueberry earth would of course make one rotation every 24 hours, but the smaller result would have a smaller moment of inertia. Angular momentum conservation gives $R_\oplus^2/T_\oplus = R_p^2/T_p$ for a uniform sphere of fixed mass, or $T_p = T_\oplus (R_p/R_\oplus)^2$, in this case 18.92 hours. This in turn will increase the oblateness a bit, to approximately 0.038 – an 8.8 times increase over Earth.
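A short sketch of the spin-up and flattening follows. The oblateness formula $f \approx 5\omega^2 R^3/(4GM)$, the first-order result for a slowly rotating uniform fluid body, is my assumption about the estimate used; it reproduces the quoted numbers to within rounding.

```python
import math

G = 6.674e-11       # m^3 kg^-1 s^-2
R_EARTH = 6.371e6   # m
M_EARTH = 5.972e24  # kg
RHO_BERRY = 700.0   # kg/m^3, assumed
RHO_PULP = 1000.0   # kg/m^3, assumed
DAY_HOURS = 24.0

mass = RHO_BERRY * 4.0 / 3.0 * math.pi * R_EARTH**3
r_pulp = R_EARTH * (RHO_BERRY / RHO_PULP) ** (1.0 / 3.0)

# I = (2/5) M R^2 and L = I * 2*pi/T is conserved, so T scales with R^2.
period_hours = DAY_HOURS * (r_pulp / R_EARTH) ** 2

def oblateness(period_h, radius, m):
    """First-order flattening of a slowly rotating uniform fluid body (assumed model)."""
    omega = 2.0 * math.pi / (period_h * 3600.0)
    return 5.0 * omega**2 * radius**3 / (4.0 * G * m)

f_pulp = oblateness(period_hours, r_pulp, mass)
f_earth = oblateness(DAY_HOURS, R_EARTH, M_EARTH)  # same model applied to Earth

print(f"new day length: {period_hours:.2f} h")
print(f"oblateness: {f_pulp:.3f} ({f_pulp / f_earth:.1f} times Earth in this model)")
```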

Another effect is the orbit of the Moon. Now the two bodies have much more comparable masses, with blueberry earth only about ten times heavier than the Moon. Is the Moon bound to blueberry earth? A kilogram of lunar material has potential energy $GM_{BE}/r \approx 1.6925 \times 10^5$ J, while the kinetic energy is $v^2/2 \approx 5.2 \times 10^5$ J – more than enough to escape. Had it remained, the jam ocean would have made an excellent tidal dissipation mechanism that would have slowed down rotation and moved blueberry earth towards tidal lock with the moon much earlier than the 50 billion years it would otherwise have taken.
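The bound-or-unbound comparison can be sketched per kilogram of lunar material. The mean Earth-Moon distance and orbital speed are standard values that I am assuming here; the exact potential figure depends on the distance and mass chosen, but the conclusion does not.

```python
import math

G = 6.674e-11         # m^3 kg^-1 s^-2
R_EARTH = 6.371e6     # m
RHO_BERRY = 700.0     # kg/m^3, assumed
MOON_DISTANCE = 3.844e8  # m, mean Earth-Moon distance (assumed standard value)
MOON_SPEED = 1022.0      # m/s, mean lunar orbital speed (assumed standard value)

mass = RHO_BERRY * 4.0 / 3.0 * math.pi * R_EARTH**3

# Depth of the potential well per kilogram at the Moon's distance.
potential_per_kg = G * mass / MOON_DISTANCE

# Kinetic energy per kilogram, unchanged by the swap.
kinetic_per_kg = 0.5 * MOON_SPEED**2

# The Moon escapes if its kinetic energy exceeds the well depth.
escapes = kinetic_per_kg > potential_per_kg

print(f"potential: {potential_per_kg:.2e} J/kg")
print(f"kinetic:   {kinetic_per_kg:.2e} J/kg")
print(f"Moon escapes: {escapes}")
```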

So, to sum up, to a person standing on the surface of the Earth when it turns into blueberries, the first effect would be a drastic reduction of gravity. Standing on the blueberries might be possible in theory, except that almost immediately they begin to compress rapidly and air starts erupting everywhere. The effect is basically the worst earthquake ever, and it keeps on going until everything has fallen 714 km. While this is going on everything heats up drastically until the entire environment is boiling jam and steam. The end result is a world that has a steam atmosphere covering an ocean of jam on top of warm blueberry granita.