Yesterday I participated in a round-table discussion with Professor Miguel Benasayag about the therapy vs. enhancement distinction at the TransVision 2014 conference. Unfortunately I could not get a word in edgewise, so it was not much of a discussion. So here are the responses I wanted to make but didn’t get the chance to: in a way this post is the opposite of l’esprit de l’escalier.

Enhancement: top-down, bottom-up, or sideways?

Do enhancements – whether implanted or not – represent a top-down imposition of order on the biosystem? If one accepts that view, one ends up with a dichotomy between that and bottom-up approaches, where biosystems are trained or placed in a smart context that produces the desired outcome: unless one thinks imposing order is a good thing, one becomes committed to some form of naturalistic conservatism.

But this ignores something Benasayag brought up himself: the body and brain are flexible and adaptable. The cerebral cortex can reorganize to become a primary cortex for any sense, depending on which input nerve is wired up to it. My friend Todd’s implanted magnet has likely reorganized a small part of his somatosensory cortex to represent his new sense. This enhancement is neither a top-down imposition of a desired cortical structure nor a pure bottom-up training of the biosystem.

Real enhancements integrate; they do not impose a given structure. This also addresses concerns about authenticity: if enhancements are entirely externally imposed – whether through implantation or external stimuli – they owe less to the person using them. But if their function emerges from the person’s biosystem, the device itself, and how it is being used, then it will function in a unique, personal way. It may change the person, but that change is based on the person.

Complex enhancements

Enhancements are often described as simple, individualistic, atomic things. But actual enhancements will be systems. A dramatic example was in my ears: since I am both French- and signing-impaired, I could listen (and respond) to comments thanks to an enhancing system involving three skilled translators and a set of wireless headphones and microphones. This system was not just complex but also adaptive (the translators knew how to improvise, and we users learned how to use it) and social (micro-norms for how to use it emerged organically).

Enhancements need a social infrastructure to function – both a shared, distributed knowledge of how and when to use them (praxis) and possibly a distributed functioning itself. A brain-computer interface is of little use without anybody to talk to. In fact, it is the enhancements that affect communication abilities that are most powerful both in the sense of enhancing cognition (by bringing brains together) and changing how people are socially situated.

Cochlear implants and social enhancement

This aspect of course links to issues in the adjacent debate about disability. Are we helping children by giving them cochlear implants, or are we undermining a vital deaf cultural community? The unique thing about cochlear implants is that they have this social effect and have to be used early in life for best results. In this case there is a tension between the need to integrate the enhancement with the hearing and language systems in an authentic way, a shift in which social community will be readily available, and concerns that the implants are just being used to normalize away the problem of deafness from the top down. How do we resolve this?

The value of deaf culture is largely its value to members: there might be some intrinsic value to the culture, but this is true for every culture and subculture. I think it is safe to say there is a fairly broad consensus in western culture today that individuals should not sacrifice their happiness – and especially not be forced to do it – for the sake of the culture. It might be supererogatory: a good thing to do, but not something that can be demanded. Culture is for the members, not the other way around: people are ends, not means.

So the real issues are the social linkages and the normalisation. How do we judge the merits of being able to participate in different social networks? One might be small but warm, another vast and mainstream. It seems that the one thing to avoid is not being able to participate in either. But this is not a technical problem as much as a problem of adaptation and culture. Once implants are good enough that learning to use them does not compete with learning to sign, the real issues become the right social upbringing and personal choice. This goes way beyond implant technology and becomes a question of how we set up social adaptation processes – a thick, rich and messy domain where we need to do much more work.

It is also worth considering the next step. What if somebody offered a communications device that would enable an entirely new form of communication, and hence of social connection? In a sense we are gaining that through new media, but one could also consider something direct, like Egan’s TAP. As that story suggests, there might be rather subtle effects if people integrate new connections – in his case merely epistemic ones, but one could imagine entirely new forms of social links. How do we evaluate them? Especially since having a few pioneers test them tells us less than it would for non-social enhancements. That remains a big question.

Justifying off-label enhancement

A somewhat fierce question I got (and didn’t get to respond to) was how I could justify occasionally taking modafinil, a drug intended for people with narcolepsy.

There seems to be a deontological or intention-oriented view behind the question: the intentions behind making the drug should be obeyed. But many drugs have been approved for one condition and then had their use expanded to other conditions. Presumably aspirin use for cardiovascular conditions is not unethical. And pharma companies largely intend to make money by making medicines, so the deep intention might be trivial to meet. More generally, claiming that the point of drugs is to help sick people (whom we have an obligation to help) doesn’t work, since drugs are obviously used by people who are not sick (sports medicine, for example). So unless many current practices are deeply unethical, this line of argument fails.

What I think was the real source of the question was the concern that my use somehow deprived a sick person of the drug. This is false, since I paid for it myself: the market is flexible enough to produce enough, and it was not a case of splitting a finite healthcare cake. The finiteness argument might apply if we were talking about how much care my neighbours and I would get for our respective illnesses, and whether they had a claim on my behaviour through our shared healthcare cake. So unless my interlocutor thought my use was likely to cause health problems she would have to pay for, this line of reasoning fails.

The deep issue is of course whether there is a normatively significant difference between therapy and enhancement. I deny it. I think the goal of healthcare should not be health but wellbeing. Health is just an enabling instrumental thing. And it is becoming increasingly individual: I do not need more muscles, but I do benefit from a better brain for my life project. Yours might be different. Hence there is no inherent reason to separate treatment and enhancement: both aim at the same thing.

That said, in practice people make this distinction and use it to judge what care they are willing to pay for on behalf of their fellow citizens. But this will shift as technology and society change, and as I said, I do not think this is a normative issue. A political issue, yes; a messy one, yes; but not a foundational one.

What do transhumanists think?

One of the greatest flaws of the term “transhumanism” is that it suggests there is something in particular that all transhumanists believe. Benasayag made some rather sweeping claims about what transhumanists want (enhancement as an expression of body-hate and a desire for control) that were most definitely not shared by the actual transhumanists in the audience or on stage. It is as problematic as claiming that all French intellectuals believe something: at best a loose generalisation, but most likely utterly misleading. But when you label a group – especially if its members are themselves trying to maintain an official label – it becomes easier to claim that all transhumanists believe something. Outsiders also do not see the sheer diversity inside, and assume everybody agrees with the few samples of writing they have read.

The fault here lies both in the laziness of outside interlocutors and in transhumanists not making their diversity clearer, perhaps by avoiding slapping the term “transhumanism” on every relevant issue: human enhancement is of interest to transhumanists, but we should be able to discuss it even if there were no transhumanists.

I have not been able to dig up the project documentation, but I would be astonished if there was any discussion of risk due to the experiment. After all, cooling things is rarely dangerous. We do not have any physical theories saying there could be anything risky here. No doubt there are risk assessments of the practical hazards of liquid nitrogen or helium somewhere, but no analysis of any basic physics risks.

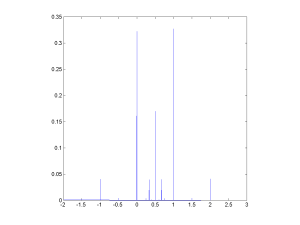

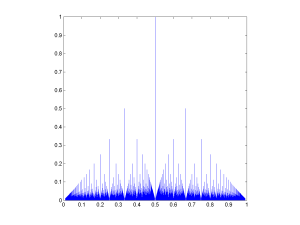

![The rational distribution of two convolved Exp[0.1] distributions.](http://aleph.se/andart2/wp-content/uploads/2014/09/trifonovexp01-300x225.png)

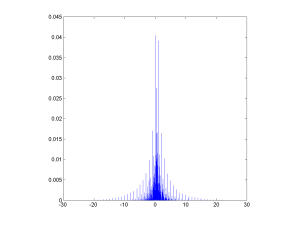

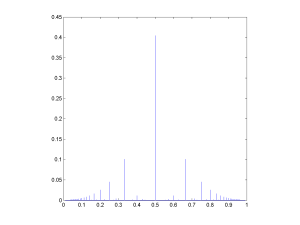

![Rational distribution of ratio between a Poisson[10] and a Poisson[5] variable.](http://aleph.se/andart2/wp-content/uploads/2014/09/trifonovpoiss105-300x225.png)