A great question from Twitter:

> Food for thought: There exists some smallest whole number which no human will ever think about.
>
> I wonder how big it is.
>
> Grant Sanderson (@3blue1brown), January 6, 2020

This is a bit like the “what is the smallest uninteresting number?” paradox, but not paradoxical: we do not have to name it (and hence make it thought about/interesting) to reason about it.

I will first give a somewhat rough probabilistic bound, and then a much easier argument for the scale of this number. TL;DR: the number is likely smaller than $10^{173}$.

Probabilistic bound

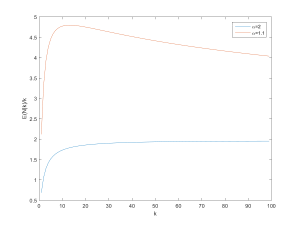

If we think about $k$ numbers $X_1, \ldots, X_k$ with frequencies $N(x)$, then $N(x)/k$ approaches some probability distribution $f(x)$. To simplify things we assume $f(x)$ is a decreasing function of $x$; this is not strictly true (see below) but likely good enough.

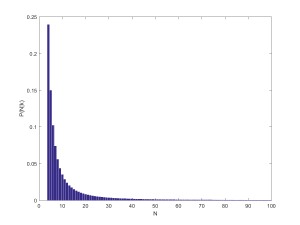

If we denote the cumulative distribution function $F(x) = \Pr[X < x]$, we can use the $k$:th order statistic to calculate the distribution of the maximum of the $k$ numbers: $F_{(k)}(x) = [F(x)]^k$. We are interested in the point where it becomes likely that the number $x$ has not come up despite the $k$ trials, which is somewhere above the median of the maximum: $F_{(k)}(x^*) = 1/2$.
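The order-statistics step can be sanity-checked numerically. The following is a minimal sketch, not part of the original argument: it assumes the simplest possible case, $k$ independent draws from a uniform distribution on $[0, 1]$, where $F(x) = x$ and the median of the maximum solves $x^k = 1/2$. The function names and the choice of distribution are illustrative assumptions.

```python
import random
import statistics


def exact_median_of_maximum(k: int) -> float:
    """Median of the maximum of k uniform[0, 1] draws.

    For the uniform case F(x) = x, so F(x)**k = 1/2 gives x = 2**(-1/k).
    """
    return 0.5 ** (1.0 / k)


def simulated_median_of_maximum(k: int, trials: int, seed: int = 0) -> float:
    """Estimate the same median by brute-force simulation."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    maxima = [max(rng.random() for _ in range(k)) for _ in range(trials)]
    return statistics.median(maxima)


if __name__ == "__main__":
    k = 10
    print("exact    :", exact_median_of_maximum(k))
    print("simulated:", simulated_median_of_maximum(k, trials=20_000))
```

The two values should agree to a couple of decimal places; the same reasoning carries over to any continuous $F$, only the inversion step changes.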

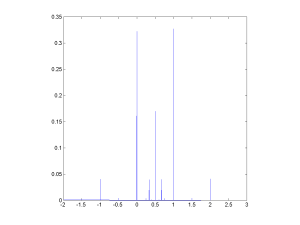

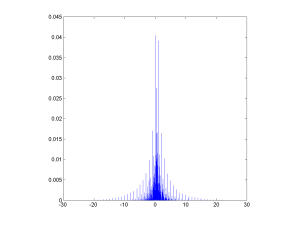

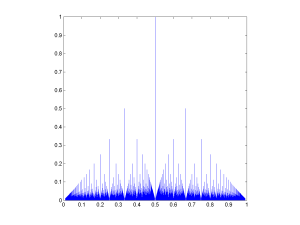

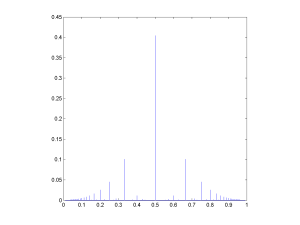

What shape does $f(x)$ have? Dorogovtsev, Mendes and Oliveira (2005) investigated numbers online and found a complex, non-monotonic shape. Obviously dates close to the present are overrepresented, as are prices (ending in .99 or .95), postal codes and other patterns. Numbers in exponential notation stretch very far up. But mentions of explicit numbers generally tend to follow $N(x) \sim 1/\sqrt{x}$, a very flat power-law.

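So if we have $k$ uses we should expect roughly $x < k^2$, since much larger $x$ are unlikely to occur even once in the sample. We can hence normalize the $1/\sqrt{x}$ density over $1 \le x \le k^2$ to get $f(x) = \frac{1}{2(k-1)} \frac{1}{\sqrt{x}}$. This gives us the cumulative distribution $F(x) = (\sqrt{x} - 1)/(k - 1)$, and hence the distribution of the maximum $F_{(k)}(x) = [F(x)]^k = [(\sqrt{x} - 1)/(k - 1)]^k$. Setting this equal to $1/2$, the median of the maximum becomes $x^* = \left((k-1) 2^{-1/k} + 1\right)^2 \approx k^2 - 2k \ln 2$. We are hence not entirely bumping into the $k^2$ ceiling, but we are close – a more careful argument is needed to take care of this.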
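To see how close to the ceiling the median sits, here is a small sketch that evaluates the closed form. It assumes the model described above, a density proportional to $1/\sqrt{x}$ truncated to $1 \le x \le k^2$, so that $F(x) = (\sqrt{x} - 1)/(k - 1)$; the function names are illustrative, not from any library.

```python
import math


def median_of_maximum(k: int) -> float:
    """Solve [(sqrt(x) - 1) / (k - 1)]**k = 1/2 for x.

    Assumes k draws from a density proportional to 1/sqrt(x) on [1, k**2].
    """
    root = 1.0 + (k - 1) * 2.0 ** (-1.0 / k)  # this is sqrt(x*)
    return root * root


def large_k_approximation(k: int) -> float:
    """Leading-order expansion of the median for large k."""
    return k * k - 2.0 * k * math.log(2.0)


if __name__ == "__main__":
    for k in (10**3, 10**6, 10**12):
        exact = median_of_maximum(k)
        approx = large_k_approximation(k)
        # Fraction of the k**2 ceiling that the median reaches.
        print(k, exact, approx, exact / k**2)
```

The ratio to $k^2$ tends to 1 as $k$ grows, which is why the order of magnitude of $x^*$ is simply $k^2$ in what follows.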

So, how large is $k$ today? Dorogovtsev et al. had on the order of $k = 10^{12}$, but that was just searchable WWW pages back in 2005. Still, even those numbers contain numbers that no human ever considered since many are auto-generated. So guessing $x^* \approx 10^{24}$ is likely not too crazy. So by this argument, there are likely 24 digit numbers that nobody ever considered.

Consider a number…

Another approach is to assume each human considers a number about once a minute throughout their lifetime, which we happily assume to be about a century. This is clearly an overestimate given childhood, sleep, innumeracy etc., but we are mostly interested in orders of magnitude anyway and are making an upper bound. It gives a personal $k$ across a life of about $10^8$. There have been about 100 billion people, so humanity has at most considered $k \approx 10^{19}$ numbers. This would give an estimate using my above formula as $x^* \approx k^2 \approx 10^{38}$.

But that assumes “random” numbers, and is a very loose upper bound, merely describing a “typical” small unconsidered number. Were we to systematically think through the numbers from 1 and onward we would have the much lower $x^* \approx 10^{19}$. Just 19 digits!
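The arithmetic behind these two figures is easy to reproduce. The sketch below uses only the inputs stated in the text (one number per minute, a century-long life, 100 billion people); the $k^2$ scaling for "random" numbers is taken as an assumption from the probabilistic argument, and the variable names are illustrative.

```python
import math

MINUTES_PER_CENTURY = 100 * 365.25 * 24 * 60  # about 5.3e7 minutes
HUMANS_EVER = 1e11                            # about 100 billion people


def numbers_considered() -> float:
    """Upper bound on numbers considered by all humans, one per minute."""
    return MINUTES_PER_CENTURY * HUMANS_EVER


if __name__ == "__main__":
    k = numbers_considered()
    # Systematic counting from 1: the first unconsidered number is about k.
    print("log10(k)    =", math.log10(k))
    # "Random" numbers: assumed scaling x* ~ k**2.
    print("log10(k**2) =", 2 * math.log10(k))
```

The exponents come out slightly under 19 and 38; the text rounds the per-person count up to $10^8$, which is harmless at this level of precision.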

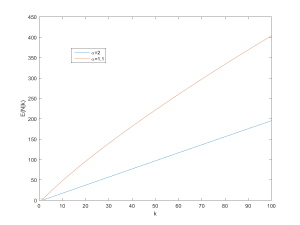

One can refine this a bit: if we have time $T$ and generate new numbers at a rate $r$ per second, then $k = rT$ and we will at most get $k$ numbers. Hence the smallest number never considered has to be at most $k + 1$.

Seth Lloyd estimated that the observable universe cannot have performed more than $10^{120}$ operations on $10^{90}$ bits. If each of those operations was a consideration of a number we get a bound on the first unconsidered number as $< 10^{120}$.

This can be used to consider the future too. Computation of our kind can continue until proton decay in $\sim 10^{36}$ years or so, giving a bound of $10^{173}$ if we use Lloyd’s formula. That one uses the entire observable universe; if we instead consider our own future light cone the number is going to be much smaller.
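As a rough check on the order of magnitude, one can evaluate a simplified version of Lloyd's scaling. This sketch assumes the operation count grows as $(t/t_P)^2$, where $t_P$ is the Planck time, and drops all numerical prefactors; it also assumes a proton-decay horizon of $10^{36}$ years. Both are assumptions made for illustration, and the function name is hypothetical.

```python
import math

PLANCK_TIME_S = 5.391e-44             # Planck time in seconds
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def log10_max_operations(t_years: float) -> float:
    """log10 of (t / t_Planck)**2, a prefactor-free form of Lloyd's bound."""
    t_seconds = t_years * SECONDS_PER_YEAR
    # Work in log space so very large exponents stay easy to read.
    return 2.0 * (math.log10(t_seconds) - math.log10(PLANCK_TIME_S))


if __name__ == "__main__":
    print("today (1.38e10 yr):", log10_max_operations(1.38e10))
    print("proton decay (1e36 yr):", log10_max_operations(1e36))
```

Without prefactors this form lands a little above the $10^{120}$ quoted for the present age, but for the far-future horizon it gives an exponent between 173 and 174, consistent with the bound in the text.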

But the conclusion is clear: if you write a 173 digit number with no particular pattern of digits (a bit more than two standard lines of typing), it is very likely that this number would never have shown up across the history of the entire universe except for your action. And there is a smaller number that nobody – no human, no alien, no posthuman galaxy brain in the far future – will ever consider.
