Introduction

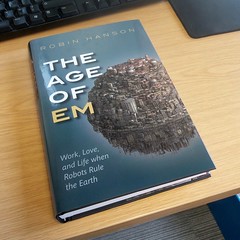

Robin Hanson’s The Age of Em is bound to be a classic.

It might seem odd, given that it is both awkward to define what kind of book it is – economics textbook, future studies, speculative sociology, science fiction without any characters? – and that most readers will disagree with large parts of it. Indeed, one of the main reasons it will become a classic is that there is so much to disagree with, and those disagreements are bound to stimulate a crop of blogs, essays, papers and maybe other books.

This is a very rich synthesis of many ideas, with a high density of fascinating arguments and claims per page just begging for deeper analysis and response. It is in many ways like an author’s first science fiction novel (Zindell’s Neverness, Rajaniemi’s The Quantum Thief, and Egan’s Quarantine come to mind) – lots of concepts and throwaway realizations have built up in the background of the author’s mind and are now out to play. Later novels are often better written, but first novels have the freshest ideas.

The second reason it will become a classic is that even if mainstream economics or futurism passes it by, it is going to profoundly change how science fiction treats the concept of mind uploading. Sure, the concept has been around for ages, but this is the first thorough treatment of what it means for a society. Any science fiction treatment henceforth will partially define itself by how it relates to the Age of Em scenario.

Plausibility

The Age of Em is all about the implications of a particular kind of software intelligence, one based on scanning human brains to produce intelligent software entities. I suspect much of the debate about the book will be more about the feasibility of brain emulations. To many people the whole concept sparks incredulity and outright rejection. The arguments against brain emulation range from pure arguments from incredulity (“don’t these people know how complex the brain is?”) through various more or less well-considered philosophical positions (from “don’t these people read Heidegger?” to questioning the inherent functionalist reductionism of the concept) to arguments about technological feasibility. Given that many people will find the notion hard to swallow, I think Robin spent too little effort bolstering its plausibility, making the book look a bit too much like what Nordmann called if-then ethics: make some outrageous assumption, then work out the conclusions (which Nordmann finds a waste of intellectual resources). I think one can make fairly strong arguments for the plausibility, but Robin is more interested in working out the consequences. I have a feeling there is now a need for a good defense of the plausibility (this and this might be a weak start, but much more needs to be done).

Scenarios

In this book, I will set defining assumptions, collect many plausible arguments about the correlations we should expect from these assumptions, and then try to combine these many correlation clues into a self-consistent scenario describing relevant variables.

What I find more interesting is Robin’s approach to future studies. He is describing a self-consistent scenario. The aim is not to describe the most likely future of all, nor to push some particular trend as far as it can go. Rather, given some assumptions, he is trying to describe what our best knowledge says is likely to occur and what fits with the other parts of the scenario into an organic whole.

The baseline scenario I generate in this book is detailed and self-consistent, as scenarios should be. It is also often a likely baseline, in the sense that I pick the most likely option when such an option stands out clearly. However, when several options seem similarly likely, or when it is hard to say which is more likely, I tend instead to choose a “simple” option that seems easier to analyze.

This baseline scenario is a starting point for considering variations such as intervening events, policy options or alternatives, intended as the center of a cluster of similar scenarios. It is typically based on the status quo and consensus models: unless there is a compelling reason elsewhere in the scenario, things will behave as they have done historically or as the mainstream models predict.

As he notes, this is different from normal scenario planning where scenarios are generated to cover much of the outcome space and tell stories of possible unfoldings of events that may give the reader a useful understanding of the process leading to the futures. He notes that the self-consistent scenario seems to be taboo among futurists.

Part of the reason, I think, is the realization that making a single forecast will usually just ensure one is wrong. Scenario analysis aims at understanding the space of possibility better, which is why analysts make several scenarios. But as critics of scenario analysis have pointed out, there is a risk of the conjunction fallacy coming into play: the more details you add to the story of a scenario, the more compelling the story becomes, but the less likely the individual scenario. Scenario analysts respond that individual scenarios should not be taken as separate things: they only make real sense as part of the bigger structure. The details are there to draw the reader into the space of possibility, not to convince them that a particular scenario is the true one.
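The conjunction effect is easy to see with a few numbers. The Python sketch below assumes, purely for illustration, that each detail of a scenario is independent and individually 90% plausible; `scenario_probability` is a hypothetical helper, not anything from the book. Without independence the product is no longer exact, but the probability of the whole story can still never exceed that of its least likely detail.

```python
from math import prod


def scenario_probability(detail_probs):
    """Probability that every detail of a story holds, under the
    illustrative assumption that the details are independent."""
    return prod(detail_probs)


if __name__ == "__main__":
    # Hypothetical: every added detail is individually quite plausible (p = 0.9).
    for n_details in (1, 5, 10, 20, 40):
        p = scenario_probability([0.9] * n_details)
        print(f"{n_details:2d} details -> P(whole story) = {p:.4f}")
```

Each added detail makes the story richer and more compelling to read, yet by forty such details the whole story is down to about 1.5%.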

Robin’s maximal consistent scenario is not directly trying to map out an entire possibility space but rather to create a vivid prototype residing somewhere in the middle of it. But if it is not a forecast, and not a scenario planning exercise, what is it? Robin suggests it is a tool for thinking about useful action:

The chance that the exact particular scenario I describe in this book will actually happen just as I describe it is much less than one in a thousand. But scenarios that are similar to true scenarios, even if not exactly the same, can still be a relevant guide to action and inference. I expect my analysis to be relevant for a large cloud of different but similar scenarios. In particular, conditional on my key assumptions, I expect at least 30% of future situations to be usefully informed by my analysis. Unconditionally, I expect at least 10%.
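The two percentages also say something about how likely Robin considers his assumptions. Write A for “the key assumptions hold” and U for “a future situation is usefully informed by the analysis”. If we take the conditional figure at face value as exactly 30% and assume (my assumption, not his) that the analysis is of no use when the assumptions fail, the law of total probability gives:

```latex
P(U) = P(U \mid A)\,P(A) + P(U \mid \neg A)\,\bigl(1 - P(A)\bigr) = 0.3\,P(A)
\qquad\text{with } P(U \mid A) = 0.3,\; P(U \mid \neg A) = 0

P(U) \ge 0.1 \quad\Longrightarrow\quad P(A) \ge \tfrac{1}{3}
```

Read this way, the unconditional claim amounts to putting at least one-in-three odds on the key assumptions; any usefulness when they fail, or a conditional figure above 30%, would lower that bound.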

To some degree this is all a rejection of how we usually think of the future in “far mode” as a neat utopia or dystopia with little detail. Forcing the reader into “near mode” changes the way we consider the realities of the scenario (compare to construal level theory). It makes responding to the scenario far more urgent than responding to a mere possibility. The closest example I know is Eric Drexler’s worked example of nanotechnology in Nanosystems and Engines of Creation.

Again, I expect much criticism quibbling about whether the status quo and mainstream positions actually fit Robin’s assumptions. I have a feeling there is much room for disagreement, and many elements presented as uncontroversial will be highly controversial – sometimes to people outside the relevant field, but quite often to people inside the field too (I am wondering about the generalizations about farmers and foragers). Much of this just means that the baseline scenario can be expanded or modified to include the altered positions, which could provide useful perturbation analysis.

It may be more useful to start from the baseline scenario and ask what the smallest changes to the assumptions are that radically change the outcome (What would it take to make lives precious? What would it take to make change slow?). That said, a good first step is likely to investigate robustness against plausible “parameter changes” and use that experience to get a sense of the overall robustness properties of the baseline scenario.
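A crude way to put numbers on the “smallest change” question is one-at-a-time perturbation: scale each parameter of a model by progressively larger factors and record the first factor that flips the qualitative outcome. The sketch below is a minimal Python illustration; the toy model, its two parameters (`demand_growth`, `copy_growth`), their values and the threshold are all hypothetical stand-ins with nothing to do with Robin’s actual analysis. In the toy, wages rise above subsistence only if demand for labor grows at least as fast as ems can be copied.

```python
# Multiplicative perturbations, ordered from small to large (in log terms).
FACTORS = [f for k in (1.1, 1.25, 1.5, 2, 3, 4, 10) for f in (k, 1 / k)]


def toy_outcome(p):
    # Hypothetical toy model: ratio of labor-demand growth to em copying rate.
    # A ratio of at least 1 stands in for "wages rise above subsistence".
    return p["demand_growth"] / p["copy_growth"]


def smallest_flip(model, baseline, threshold, factors=FACTORS):
    """For each parameter, return the first factor in `factors` that moves the
    model output across `threshold` (others held at baseline), else None."""
    base_side = model(baseline) >= threshold
    flips = {}
    for name in baseline:
        flips[name] = None
        for f in factors:
            trial = {**baseline, name: baseline[name] * f}
            if (model(trial) >= threshold) != base_side:
                flips[name] = f
                break
    return flips


if __name__ == "__main__":
    baseline = {"demand_growth": 0.5, "copy_growth": 2.0}  # hypothetical values
    print(smallest_flip(toy_outcome, baseline, threshold=1.0))
    # prints {'demand_growth': 4, 'copy_growth': 0.25}
```

In this toy, a fourfold change in either parameter is needed to escape the Malthusian regime. One-at-a-time scans miss interactions between parameters, so they are only a first step towards the overall robustness picture.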

Beyond the Age of Em

But is this important? We could work out endlessly detailed scenarios of other possible futures: why this one? As Robin argued in his original paper, while it is hard to say anything about a world with de novo engineered artificial intelligence, the constraints of neuroscience and economics make this scenario somewhat more predictable: it is a gap in the mist clouds covering the future, even if it is a small one. But more importantly, the economic arguments seem fairly robust regardless of sociological details: copyable human/machine capital is economic plutonium (cf. this and this paper). Since capital can almost directly be converted into labor, the economy will likely grow radically. This seems to be true regardless of whether we talk about ems or AI, and it is clearly a big deal if we think things like the industrial revolution matter – especially a future disruption of our current order.
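The plutonium point can be illustrated with a textbook toy growth model (a standard Solow-versus-“AK” contrast, not Robin’s own model). The Python sketch below assumes a Cobb-Douglas economy with made-up parameter values. When labor is a fixed stock of humans, reinvesting output runs into diminishing returns and growth fades away; when labor can be bought with capital (here at a hypothetical cost `EM_COST` per unit of labor), output becomes proportional to capital and the growth rate stays constant.

```python
ALPHA = 0.3    # capital share in Cobb-Douglas production (assumed)
SAVINGS = 0.2  # fraction of output reinvested each period (assumed)
DEPREC = 0.05  # capital depreciation per period (assumed)
EM_COST = 1.0  # hypothetical capital cost of running one unit of em labor


def output_fixed_labor(k, labor=1.0):
    # Classic case: labor is a fixed stock of humans, so returns to capital diminish.
    return k ** ALPHA * labor ** (1 - ALPHA)


def output_copyable_labor(k):
    # Copyable-labor case: capital is split between machines and em hardware.
    # The output-maximizing split puts a share ALPHA into machines and the rest
    # into running ems, so output becomes linear in k (an "AK" economy).
    machines = ALPHA * k
    labor = (1 - ALPHA) * k / EM_COST
    return machines ** ALPHA * labor ** (1 - ALPHA)


def growth_rates(output, k0=1.0, periods=200):
    """Per-period growth rate of capital when a fixed share of output is reinvested."""
    k = k0
    rates = []
    for _ in range(periods):
        k_next = k + SAVINGS * output(k) - DEPREC * k
        rates.append(k_next / k - 1)
        k = k_next
    return rates


if __name__ == "__main__":
    fixed = growth_rates(output_fixed_labor)
    copyable = growth_rates(output_copyable_labor)
    print(f"fixed labor:    first {fixed[0]:.4f}, last {fixed[-1]:.6f}")
    print(f"copyable labor: first {copyable[0]:.4f}, last {copyable[-1]:.6f}")
```

Whether the constant rate in the copyable case is positive depends on the assumed numbers (reinvested output per unit of capital must exceed depreciation); with these values it is about 5.9% per period, while the fixed-labor economy starts faster but stalls near its steady state.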

In fact, people have already criticized Robin for not going far enough. The age described may not last long in real time before it evolves into something far more radical. As Scott Alexander pointed out in his review and a subsequent post, an “ascended economy” where automation and on-demand software labor function together can be a far more powerful and terrifying force than merely a posthuman Malthusian world. It could produce some of the dystopian posthuman scenarios envisioned in Nick Bostrom’s “The future of human evolution”: essentially axiological existential threats, where what gives humanity value disappears.

We do not yet have good tools for analyzing this kind of scenario. Mainstream economics is busy analyzing the economy we have, not future models. Given that the expertise needed to reason about the future of a domain is often fundamentally different from the expertise needed within the domain, we should not even assume that economists or other social scientists can say much of use about this, except insofar as they have found reliable universal rules that can be applied. As Robin likes to point out, there are far more results of that kind in the “soft sciences” than outsiders believe. But they might still not be enough to constrain the possibilities.

Yet it would be remiss not to try. The future is important: that is where we will spend the rest of our lives.

If the future matters more than the past, because we can influence it, why do we have far more historians than futurists? Many say that this is because we just can’t know the future. While we can project social trends, disruptive technologies will change those trends, and no one can say where that will take us. In this book, I’ve tried to prove that conventional wisdom wrong.

Warren Ellis’ Normal is a little story about the problem of being serious about the future.