Wednesday August 10 1960

Robert lit his pipe while William meticulously set the coordinates from the computer printout. “Want to bet?”

William did not look up from fine-tuning the dials and re-checking the flickering oscilloscope screen. “Five dollars that we get something.”

“‘Something’ is not going to be enough to make Edward or the General happy. They want the future on film.”

“If we get more delays we can always just step out with a camera. We will be in the future already.”

“Yeah, and fired.”

“I doubt it. This is just a blue-sky project Ed had to try because John and Richard’s hare-brained one-electron idea caught the eye of the General. It will be like the nuclear mothball again. There, done. You can start it.”

Robert put the pipe in the ashtray and walked over to the Contraption controls. He noted down the time and settings in the log, then pressed the button. “Here we go.” The Contraption hummed for a second, the cameras clicked. “OK, you bet we got something. You develop the film.”

“We got something!” William was exuberant enough to have forgotten the five dollars. He put down the still-moist prints on Robert’s desk. Four black squares. He thrust a magnifying glass into Robert’s hands and pointed at a corner. “Recognize it?”

It took Robert a few seconds to figure out what he was looking at. First he thought there was nothing there but noise, then eight barely visible dots became a familiar shape: Orion. He was seeing a night sky. In a photo taken inside a basement lab. During the day.

“Well… that is really something.”

Tuesday August 16 1960

The next attempt was far more meticulous. William had copied the settings from the previous attempt, changed them slightly in the hope of a different angle, and had Raymond re-check it all on the computer despite the cost. This time they developed the film together. As the seal of the United States of America began to emerge on the film, they turned to each other at the same moment.

“Am I losing my mind?”

“That would make two of us. Look, there is text there. Some kind of plaque…”

The letters gradually filled in. “THIS PLAQUE COMMEMORATES THE FIRST SUCCESSFUL TRANSCHRONOLOGICAL OBSERVATION August 16 1960 to July 12 2054.” Below was more blurry text.

“Darn, the date is way off…”

“What do you mean? That is today’s date.”

“The other one. Theory said it should be a month in the future.”

“Idiot! We just got a message from the goddamn future! They put a plaque. In space. For us.”

Wednesday 14 December 1960

The General was beaming. “Gentlemen, you have done your country a great service. The geographic coordinates on Plaque #2 contained excellent intel. I am not at liberty to say what we found over there in Russia, but this project has already paid off far beyond expectation. You are going to get medals for this.” He paused and added in a lower voice: “I am relieved. I can now go to my grave knowing that the United States is still kicking communist butt 90 years in the future.”

One of the General’s aides later asked Robert: “There is something I do not understand, sir. How did the people in the future know where to put the plaques?”

Robert smiled. “That bothered us too for a while. Then we realized that it was the paperwork that told them. You guys have forced us to document everything. Just saying, it is a huge bother. But that also meant that every setting is written down and archived. Future people with the right clearances can just look up where we looked.”

“And then go into space and place a plaque?”

“Yes. Future America is clearly spacefaring. The most recent plaques also contain coordinate settings for the next one, in addition to the intel.”

He did not mention the mishap. When they entered the coordinates for Plaque #4 given on Plaque #3, William had made a mistake – understandable, since the photo was blurry – and they photographed the wrong spacetime spot. Except that Plaque #4 was there. It took them a while to realize that what mattered was what settings they entered into the archive, not what the plaque said.

“They knew where we would look,” Robert had said in wonder.

“Why did they put different coordinates on #3, then? We could just set random coordinates and they would put a plaque there.”

“Have a heart. I assume that would force them to run around the entire solar system putting plaques in place. Theory says the given coordinates are roughly in Earth’s vicinity – more convenient for our hard-working future astronauts.”

“You know, we should try putting the wrong settings into the archive.”

“You do that if the next plaque is a dud.”

Friday 20 January 1961

Still, something about the pattern bothered Robert. The plaques contained useful information, including how to make a better camera and electronics. The General was delighted, obviously thinking of spy satellites not dependent on film canisters. But there was not much information about the world: if he had been sending information back to 1866, wouldn’t he have included some modern news clippings, maybe a warning about stopping that Marx guy?

Suppose things did not go well in the future. The successors of that shoe-banging Khrushchev somehow won and instituted their global dictatorship. They would pore over the remaining research of the formerly free world, having their minions squeeze every creative idea out of the archives. Including the coordinates for the project. Then they could easily fake messages from a future America to fool his present, maybe even laying the groundwork for their success…

William was surprisingly tricky to convince. Robert had assumed he would be willing to help with the scheme just because it was against the rules, but he had been at least partially taken in by the breathtaking glory of the project and the prospect of his own future career. Still, William was William and could not resist a technical challenge. Setting up an illicit calculation on the computer disguised as an abortive run with a faulty set of punch cards was just his kind of thing. He had always loved cloak-and-dagger stuff. Robert made use of the planned switch to the new cameras to make the disappearance of one roll of film easy to overlook. The security guards knew both of them worked ungodly hours.

“Want to bet?” William asked.

“Bet what? That we will see a hammer and sickle across the globe?”

“Something simpler: that there will be a plaque saying ‘I see you peeping!’.”

Robert shivered. “No thanks. I just want to be reassured.”

“It is a shame we can’t get better numerical resolution; if we are lucky we will just see Earth. Imagine if we could get enough decimal places to put the viewport above Washington DC.”

The photo was beautiful. Black space, and slightly off-centre there was a blue and white marble. Robert realized that they were the first people ever to see the entire planet from this distance. Maybe in a decade or so, a man on the moon would actually see it like that. But the planet looked fine. Were there glints of something in orbit?

“Glad I did not make the bet with you. No plaque.”

“The operational security of the future leaves a bit to be desired.”

“…that is actually a good answer.”

“What?”

“Imagine you are running Future America, and have a way of telling the past about important things. Like whatever was in Russia, or whatever is in those encrypted sequences on Plaque #9. Great. But Past America can peek at you, and they don’t have all the counterintelligence gadgets and tricks you do. So if they peek at something sensitive – say the future plans for a super H-bomb – then the Past Commies might steal it from you.”

“So the plaques are only giving us what we need, or is safe if there are spies in the project.”

“Future America might even do a mole-hunt this way… But more importantly, you would not want Past America to watch you too freely since that might leak information to not just our adversaries or the adversaries of Future America, but maybe mid-future adversaries too.”

“You are reading too many spy novels.”

“Maybe. But I think we should not try peeking too much. Even if we know we are trustworthy, I have no doubt there are some sticklers in the project – now or in the future – who are paranoid.”

“More paranoid than us? Impossible. But yes.”

With regret Robert burned the photo later that night.

February 1962

As the project continued, its momentum snowballed and it became ever harder to keep track of. Manpower was added. Other useful information was sent back: theory, technology, economics, forecasts. All benign. More and more was encrypted. Robert surmised that somebody simply put the encryption keys in the archive and let the future send things back securely to the right recipients.

His own job was increasingly to lead the work on building a more efficient “Conduit”. The Contraption would be retired in favour of an all-electronic version, all integrated circuits and rapid information flow. It would remove the need for future astronauts to precisely place plaques around the solar system: the future could send information as easily as using ComLogNet teletype terminals.

William was enthusiastically helping the engineers implement the new devices. He seemed almost giddy with energy as new tricks arrived weekly and wonders emerged from the workshop. A better camera? Hah, the new computers were light-years ahead of anything anybody else had.

So why did Robert feel like he was being fooled?

Wednesday 28 February 1962

In a way this was a farewell to the Contraption around which his life had revolved for the past few years: tomorrow the Conduit would take over upstairs.

Robert quietly entered the coordinates into the controls. This time he had done most of the work himself: he could run jobs on the new mainframe and the improved algorithms Theory had worked out made a huge difference.

It was also perhaps his last chance to actually do things himself. He had found himself increasingly insulated as a manager, encapsulated by subordinates, regulations, and schedules. The last time he had held a soldering iron had been months ago. He relished the muggy red warmth of the darkroom as he developed the photos.

The angles were tilted, but the photos were stranger than anything he had anticipated. One showed what he thought was the DC region, but the whole area was an empty swampland dotted with overgrown ruins. New York was shrouded in a thunderstorm, but he could make out glowing skyscrapers miles high shedding von Kármán vortices in the hurricane-strength winds. One photo showed a gigantic structure near the horizon that must have been a hundred kilometres tall, surmounted by an aurora. This was not a communist utopia. Nor was it the United States in any shape or form. It was not a radioactive wasteland: he was pretty sure at least one photo showed some kind of working rail line. This was an alien world.

When William put his hand on his shoulder Robert nearly screamed.

“Anything interesting?”

Wordlessly he showed the photos to William, who nodded. “Thought so.”

“What do you mean?”

“When do you think this project will end?”

Robert gave it some thought. “I assume it will run as long as it is useful.”

“And then what? It is not like we would pack up the Conduit and put it all in archival storage.”

“Of course not. It is tremendously useful.”

“Future America still has the project. They are no doubt getting intel from further down the line. From Future Future America.”

Robert saw it. A telescoping series of Conduits shipping choice information from further into the future to the present. Presents. Some of which would be sending it further back. And at the futuremost end of the series…

“I read a book where they discussed progress, and the author suggested that all of history is speeding up towards some super-future. The Contraption and the Conduit allow the super-future to come here.”

“It does not look like there are any people in the super-future.”

“We have been evolving for millions of years, slowly. What if we could just cut to the chase?”

“Humanity ending up like that?” He gestured towards Thunder New York.

“I think that is all computers. Maybe electronic brains are the inhabitants of the future.”

“We must stop it! This is worse than commies. Russians are at least human. We must prevent the Conduit…”

William smiled broadly. “That won’t happen. If you blew up the Conduit, don’t you think there would be a report? A report archived for the future? And if you were Future America, you would probably send back an encrypted message addressed to the right person saying ‘Talk Robert out of doing something stupid tonight’? Even better, a world where someone gets your head screwed on straight, reports accurately about it, and the future sends back a warning to the person is totally consistent.”

Robert stepped away from William in horror. The red gloom of the darkroom made him look monstrous. “You are working for them!”

“Call it freelancing. I get useful tips, I do my part, things turn out as they should. I expect a nice life. But there is more to it than that, Robert. I believe in moral progress. I think those things in your photos probably know better than we do – yes, they are probably more alien than little green men from Mars, but they have literally eons of science, philosophy and whatever comes after that.”

“Mice.”

“Mice?”

“MICE: Money, Ideology, Coercion, Ego. The formula for recruiting intelligence assets. They got you with all but the coercion part.”

“They did not have to. History, or rather physical determinism, coerces us. Or, ‘you can’t fight fate’.”

“I’m doing this to protect free will! Against the Nazis. The commies! Your philosophers!”

“Funny way you are protecting it. You join this organisation, you allow yourself to become a cog in the machine, feel terribly guilty about your little experiments. No, Robert, you are protecting your way of life. You are protecting normality. You could just as well have been in Moscow right now working to protect socialism.”

“Enough! I am going to stop the Conduit!”

William held up a five-dollar bill. “Want to bet?”

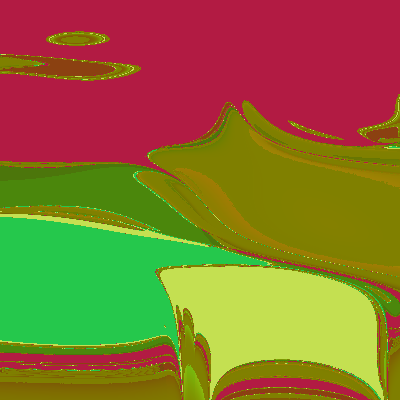

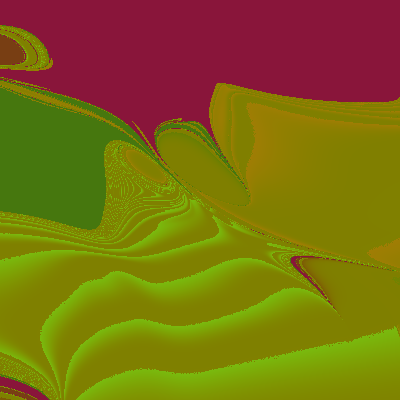

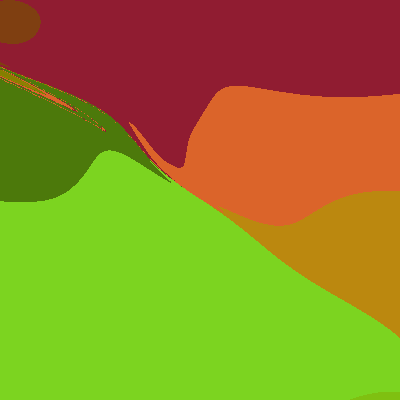

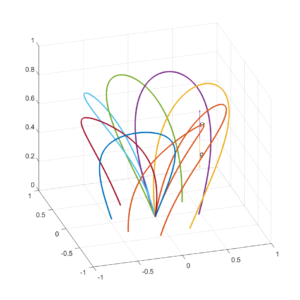

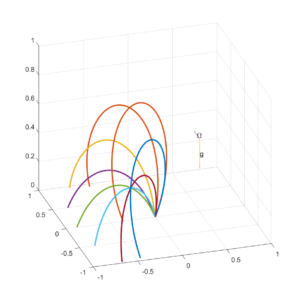

numbers with frequencies

,

approaches some probability distribution

. To simplify things we assume

is a decreasing function of

; this is not strictly true (see below) but likely good enough.

we can use the k:th order statistics to calculate the distribution of the maximum of the numbers:

. We are interested in the point where it becomes likely that the number

has not come up despite the trials, which is somewhere above the median of the maximum:

.
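The order-statistics step above can be sketched in a few lines. This is a minimal illustration that assumes the N mentions are independent draws from one fixed distribution, which is a simplifying assumption and not something the post establishes. Under that assumption the maximum of N draws satisfies P(max ≤ x) = F(x)^N, so its median sits where F(x) = 2^(-1/N). A specific number with per-draw probability p(x) is missed entirely with probability (1 - p(x))^N. The function names are illustrative only.

```python
import math


def prob_max_at_most(cdf_x: float, n: int) -> float:
    """P(max of n i.i.d. draws <= x), given the per-draw CDF value F(x) = cdf_x."""
    return cdf_x ** n


def median_of_max_level(n: float) -> float:
    """CDF level F(x) at which the maximum of n draws has its median: F(x)**n = 1/2."""
    # exp(-ln 2 / n) is 2**(-1/n), written this way to stay stable for huge n
    return math.exp(-math.log(2.0) / n)


def prob_never_drawn(p_x: float, n: float) -> float:
    """Probability that a value with per-draw probability p_x never shows up in n draws."""
    # log1p keeps (1 - p_x)**n accurate when p_x is tiny and n is huge
    return math.exp(n * math.log1p(-p_x))


if __name__ == "__main__":
    n = 10**6
    print(median_of_max_level(n))  # a CDF level very close to 1
    # A value becomes more likely than not to be missing once n * p(x) drops below about ln 2
    print(prob_never_drawn(math.log(2.0) / n, n))
```

The last line shows the rule of thumb that falls out of this: a number is probably unseen once N·p(x) is below roughly ln 2.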

have? (Dorogovtsev, Mendes, Oliveira 2005) investigated numbers online and found a complex, non-monotonic shape. Obviously dates close to the present are overrepresented, as are prices (ending in .99 or .95), postal codes and other patterns. Numbers in exponential notation stretch very far up. But mentions of explicit numbers generally tend to follow

, a very flat power-law.

uses we should expect roughly

since much larger x are unlikely to occur even once in the sample. We can hence normalize to get

. This gives us

, and hence

. The median of the maximum becomes

. We are hence not entirely bumping into the

ceiling, but we are close – a more careful argument is needed to take care of this.
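The post's own formulas for this step did not survive, so here is a hedged sketch of the same idea under explicit assumptions. It treats mentions as a continuous power law p(x) ~ x^(-alpha) on [1, x_max]. The exponent `alpha` and the cutoff `x_max` are free parameters for the reader to choose; they are not the fitted values from the cited study. The median of the maximum of n draws then has a closed form. When that median lands close to `x_max`, we are bumping into the ceiling in the sense described above.

```python
import math


def powerlaw_cdf(x: float, alpha: float, x_max: float) -> float:
    """CDF of a continuous power law p(x) ~ x**(-alpha), truncated to [1, x_max]."""
    if math.isclose(alpha, 1.0):
        return math.log(x) / math.log(x_max)
    a = 1.0 - alpha
    return (x ** a - 1.0) / (x_max ** a - 1.0)


def median_of_max(alpha: float, x_max: float, n: float) -> float:
    """Solve F(x)**n = 1/2 (i.e. F(x) = 2**(-1/n)) for the truncated power law above."""
    q = math.exp(-math.log(2.0) / n)  # target CDF level 2**(-1/n)
    if math.isclose(alpha, 1.0):
        # F(x) = ln x / ln x_max, so x = x_max**q
        return x_max ** q
    a = 1.0 - alpha
    # Invert (x**a - 1) / (x_max**a - 1) = q for x
    return (1.0 + q * (x_max ** a - 1.0)) ** (1.0 / a)
```

Increasing `n` pushes the median towards `x_max`, which is why the choice of cutoff matters so much for a flat power law.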

, but that was just searchable WWW pages back in 2005. Still, even those pages contain numbers that no human ever considered, since many are auto-generated. So guessing

is likely not too crazy. So by this argument, there are likely 24-digit numbers that nobody ever considered.

across a life as about

. There have been about 100 billion people, so humanity has at most considered

numbers. This would give an estimate using my above formula as

.

. Just 19 digits!

and generate new numbers at a rate

per second, then

and we will at most get

numbers. Hence the smallest number never considered has to be at most

.
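The counting bound in this paragraph is a pigeonhole argument. If at most M numbers have ever been considered, then at least one of 1, 2, ..., M + 1 was not, so the smallest unconsidered number is at most M + 1. The minimal sketch below uses hypothetical inputs for the number of minds, the rate and the duration, because the post's own values did not survive.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, in seconds


def max_numbers_considered(minds: float, rate_per_second: float, years: float) -> float:
    """Upper bound M on how many numbers a population could ever consider."""
    return minds * rate_per_second * years * SECONDS_PER_YEAR


def smallest_unconsidered_bound(total_considered: float) -> int:
    """Pigeonhole: with at most M considerations, some number in 1..M+1 was never considered."""
    return math.floor(total_considered) + 1


def digit_count(n: int) -> int:
    """Number of decimal digits of a positive integer."""
    return len(str(n))
```

For instance, 10^10 minds each considering one number per second for 10^9 years give M of roughly 3 × 10^26. Some number with at most 27 digits is then never considered. These inputs are purely illustrative. The same bound applies to the 100-billion-people estimate above once a per-lifetime count is chosen.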

operations on

bits. If each of those operations was a consideration of a number we get a bound on the first unconsidered number as

.

years or so, giving a bound of

if we use Lloyd’s formula. That one uses the entire observable universe; if we instead consider our own future light cone the number is going to be much smaller.
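The cosmological bound can be sketched under one assumption: that Lloyd's estimate scales as roughly (t / t_P)^2 elementary operations for a universe of age t, where t_P is the Planck time. This is an order-of-magnitude form with prefactors dropped. The sketch works in log10 to avoid floating-point overflow for very long horizons. It then turns the operation count into an approximate digit count via the pigeonhole bound. The time horizon is left as a parameter because the post's value did not survive.

```python
import math

PLANCK_TIME_S = 5.391e-44              # Planck time, in seconds
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, in seconds


def log10_lloyd_ops(years: float) -> float:
    """log10 of (t / t_P)**2 for an elapsed time t given in years (order of magnitude only)."""
    t_seconds = years * SECONDS_PER_YEAR
    return 2.0 * (math.log10(t_seconds) - math.log10(PLANCK_TIME_S))


def bound_digits(years: float) -> int:
    """Approximate digit count of the pigeonhole bound ops + 1 on the smallest unconsidered number."""
    return math.floor(log10_lloyd_ops(years)) + 1
```

With `years = 13.8e9`, roughly the present age of the universe, `log10_lloyd_ops` comes out at about 121.8. Because the count grows only as t^2, each extra factor of ten in the horizon adds just two digits to the bound.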